SEO is still the foundation of AI visibility. It is no longer the ceiling. In July 2025, the Pew Research Center analyzed 68,000 real Google searches and found that users clicked an organic result 8% of the time when an AI Overview appeared, compared to 15% when one did not, and clicked a cited source inside the AI Overview itself only 1% of the time (Pew Research, July 2025). In a separate analysis of 1.4 million ChatGPT prompts, Ahrefs found that only 12% of URLs cited by ChatGPT also rank in Google's top 10 for the same query (Ahrefs, 2025). Those two numbers cannot be reconciled by any SEO-only strategy.

This post is for SEO professionals who have heard the "GEO is just SEO" argument and want the data to evaluate it honestly. We will walk through the published research, break down how each major LLM actually selects sources, and explain where the SEO playbook stops working. The conclusion is not that SEO is dead. It is that SEO is the floor, and AI visibility now needs its own ceiling.

TL;DR

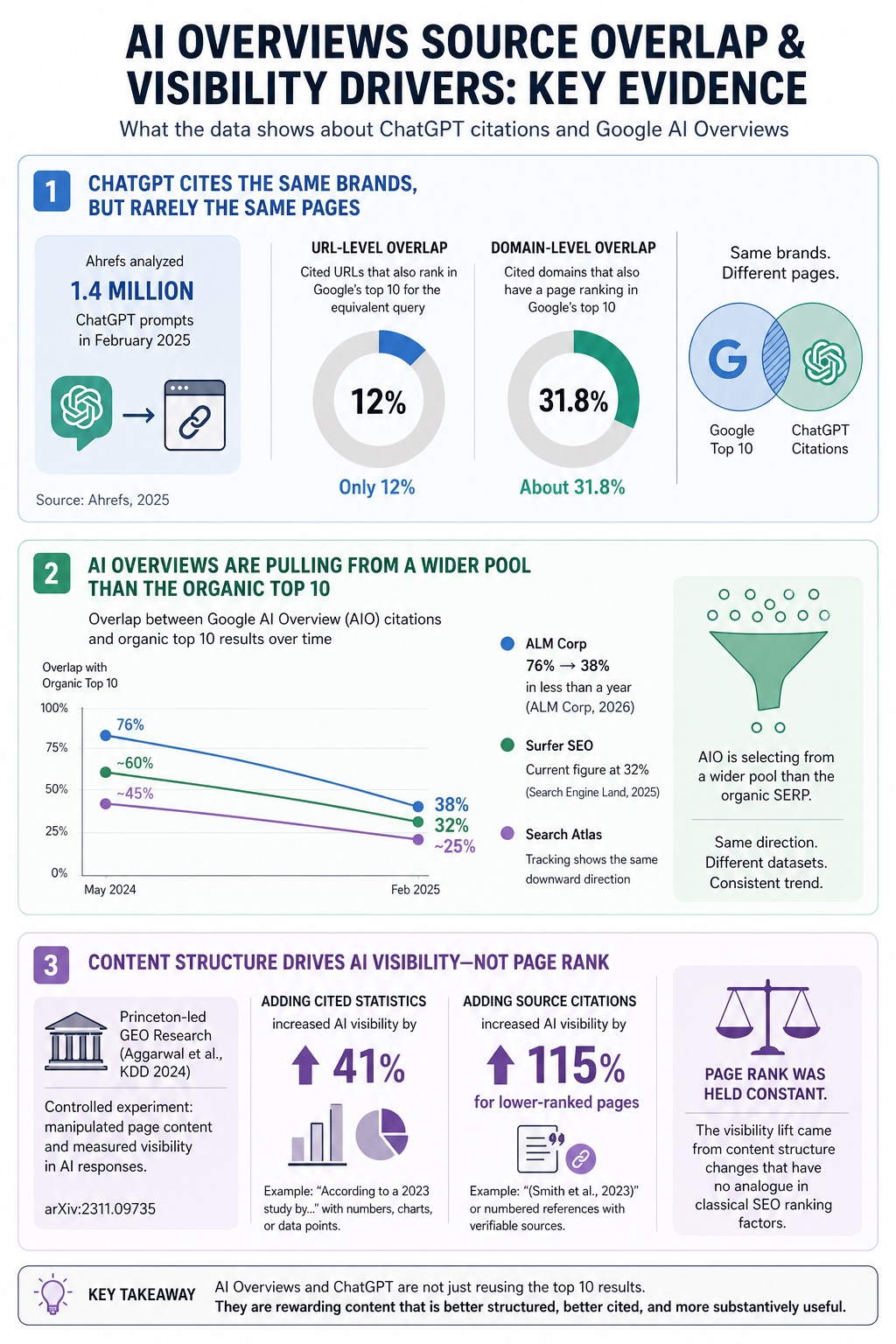

Traditional SEO and AI citation are correlated but not the same system. Multiple independent studies put URL-level overlap between Google rankings and AI citations at roughly 12 to 38 percent, depending on platform and query.

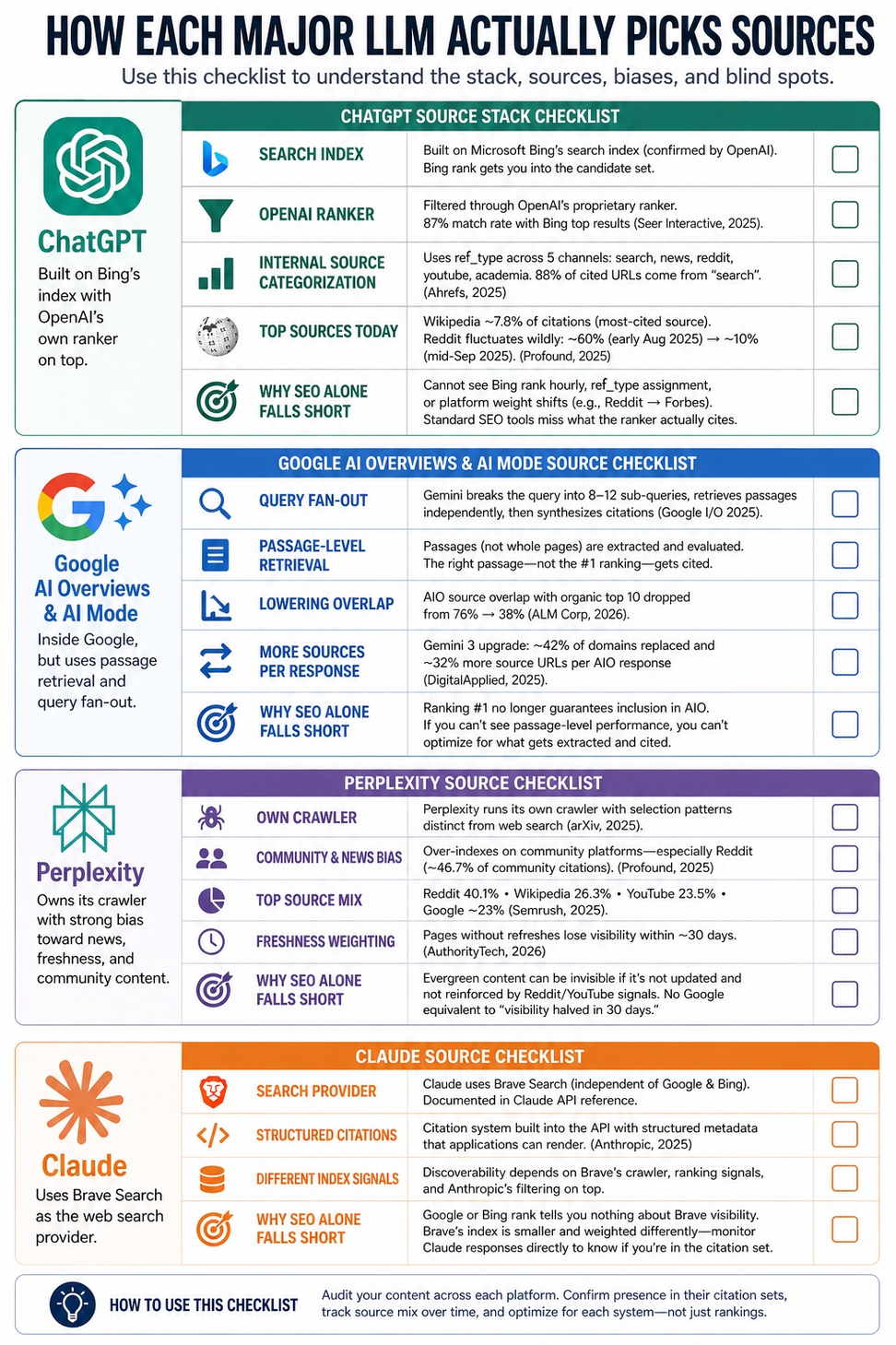

Each major LLM uses a different retrieval stack. ChatGPT layers its own ranker on Bing's index. Google AI Mode uses query fan-out and passage retrieval. Perplexity runs its own crawler with strong news and freshness bias. Claude uses Brave Search. Copilot pulls from Bing with concentrated single-source citations.

Content that ranks #1 organically can still be invisible to LLMs. A Princeton-led research team showed that adding statistics increased AI visibility by 41% and adding citations increased it by 115% for lower-ranked pages, holding rank constant.

AI search referral traffic grew 13x between July 2024 and May 2025, and ChatGPT-referred visitors convert at roughly 11.4% versus 5.3% for organic search. The channel is small but real and high-intent.

"GEO is just SEO" is partially true at the foundation layer (E-E-A-T, schema, crawlability) and partially false at the optimization layer (passage extractability, entity density, multi-platform monitoring). Both can be true at once.

What "SEO is enough" actually claims

The strongest version of the SEO-only argument, articulated by people like Will Scott and echoed in Google's own guidance from John Mueller, is this: AI search is grounded in the same web Google indexes, so if you do good SEO, you will be cited (Search Engine Land, 2025). E-E-A-T, structured content, and topical authority are the same inputs whether the consumer is Googlebot, a Gemini summarizer, or a ChatGPT retrieval agent.

That is correct in spirit and wrong in operation. The foundation is shared. The selection layer on top of it is not. SEO optimizes a page for one ranked list. AI visibility requires the page to survive a retrieval pipeline, an embedding match, a passage extraction step, and an LLM's synthesis judgment, often across five or six different platforms with five or six different rankers.

That is a different problem with a different toolset. Treating it as the same problem is how brands become invisible.

The data: where SEO and AI citations diverge

Three independent datasets tell the same story from different angles.

Ahrefs analyzed 1.4 million ChatGPT prompts in February 2025 and found that only 12% of cited URLs also rank in Google's top 10 for the equivalent query, while domain-level overlap was about 31.8% (Ahrefs, 2025). ChatGPT cites domains that Google also ranks roughly a third of the time, but it rarely cites the same pages.

Search Atlas, ALM Corp, and Surfer SEO have all tracked how much Google AI Overview sources overlap with the organic top 10 over time. ALM Corp reported that the share of AIO citations coming from top-10 ranked pages dropped from 76% to 38% in less than a year (ALM Corp, 2026). Surfer SEO put the current figure at 32% (Search Engine Land, 2025). Every dataset points in the same direction: AI Overviews select from a wider pool than the organic SERP.

The Princeton-led GEO research paper (Aggarwal et al., KDD 2024) ran a controlled experiment manipulating page content and measuring visibility in AI responses. Adding cited statistics increased AI visibility by 41%. Adding source citations increased visibility by 115% for lower-ranked pages (arXiv:2311.09735). The pages' search ranking positions were held constant. The visibility lift came from content structure changes that have no analogue in classical SEO ranking factors.
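A figure like "41% more visibility" is a relative lift, not a percentage-point gain. The sketch below shows that arithmetic under a simplifying assumption: visibility is measured as the share of an answer's words that come from sentences attributed to a given source. The GEO paper defines its own impression metrics, so treat this as an illustration rather than its exact formula; the function names and sample answers are hypothetical.

```python
def visibility_share(attributed_sentences, source_id):
    """Fraction of answer words in sentences attributed to `source_id`.

    `attributed_sentences` is a list of (sentence_text, source_id) pairs,
    a simplified stand-in for a cited AI answer (assumption).
    """
    total = sum(len(text.split()) for text, _ in attributed_sentences)
    if total == 0:
        return 0.0
    mine = sum(len(text.split())
               for text, src in attributed_sentences if src == source_id)
    return mine / total


def relative_lift(before, after):
    """Relative change in percent: (after - before) / before * 100."""
    if before == 0:
        raise ValueError("baseline visibility is zero; relative lift is undefined")
    return (after - before) / before * 100


# Hypothetical answers for the same query, before and after page "A" adds a statistic.
answer_before = [
    ("Option A is popular with small teams", "A"),
    ("Option B has broader integrations and lower cost overall", "B"),
]
answer_after = [
    ("Option A cut onboarding time in a 2024 survey of teams", "A"),
    ("Option B has broader integrations", "B"),
]

lift = relative_lift(visibility_share(answer_before, "A"),
                     visibility_share(answer_after, "A"))
print(f"Visibility lift for A: {lift:.1f}%")
```

In this toy data, page A's share of the answer rises from 7/16 to 11/16 of its words, a relative lift of about 57%.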

If SEO ranking and AI citation were the same thing, you would not see those gaps and you would not see those lifts.

Why the gap exists: retrieval is not ranking

Google's classical ranking system takes a query, matches it against an index, and returns a ranked list of pages. Search Generative Experience, AI Mode, and every LLM-backed search system added a step on top of that. They retrieve, then synthesize.

This matters because the synthesis step has its own selection criteria. Mike King, who coined the term "Relevance Engineering" to describe the new discipline, summarized it this way at SEO Week 2025: passage indexing requires optimizing at the passage level, but no traditional SEO tool does that. They all optimize entire pages (iPullRank, 2025).

Lily Ray's deconstruction of the RAG pipeline in her 2025 reflection on AI search makes the same architectural point. LLMs either lean on static training data or trigger a real-time web search for grounding, and the retrieval and ranking inside that grounding pass is opaque to standard SEO instrumentation (Lily Ray, 2025).

The practical implication: a page that wins the SERP can still lose the passage extraction. And a page that loses the SERP can still win the passage extraction. SEO tools cannot see this distinction because they were never designed to.
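A toy sketch makes the page-versus-passage distinction concrete. It scores two hypothetical pages against one query, first as whole pages and then passage by passage, using a crude term-overlap score. That scoring is an assumption chosen for readability; production systems use embeddings and learned rankers.

```python
import re


def tokens(text):
    """Lowercased alphanumeric tokens; a deliberately crude tokenizer."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def overlap_score(query, text):
    """Fraction of distinct query terms found in `text` (toy relevance score)."""
    q = tokens(query)
    return len(q & tokens(text)) / len(q) if q else 0.0


def best_page(query, pages):
    """Page-level view: score each page as a single block of text."""
    return max(pages, key=lambda name: overlap_score(query, " ".join(pages[name])))


def best_passage(query, pages):
    """Passage-level view: score every passage independently."""
    candidates = [(name, p) for name, passages in pages.items() for p in passages]
    return max(candidates, key=lambda c: overlap_score(query, c[1]))


query = "CRM churn onboarding checklist"
pages = {
    # Broad pillar page: covers every term, but in separate passages.
    "pillar": ["CRM churn basics", "Onboarding tips", "A checklist for teams"],
    # Narrow page: one passage answers most of the query at once.
    "niche": ["Intro to our product", "CRM churn onboarding steps"],
}

print(best_page(query, pages))     # page-level winner
print(best_passage(query, pages))  # passage-level winner
```

The broad pillar page wins at page level because it mentions every query term somewhere, but the single best passage comes from the narrower page.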

How each major LLM actually picks sources

This is the section your SEO peers will want to scrutinize. Each platform has a different stack, a different bias, and a different blind spot.

ChatGPT

ChatGPT's web layer is built on top of Bing's search index, confirmed by OpenAI's VP of Engineering and visible in OpenAI's own documentation of the OAI-SearchBot crawler (Search Engine Land, 2025). Seer Interactive measured an 87% match rate between SearchGPT citations and Bing's top organic results (Seer Interactive, 2025).

But that is the input. The output is filtered through OpenAI's own ranker. Ahrefs' 1.4 million prompt study found that ChatGPT internally categorizes retrieved sources using a ref_type field across five channels: search, news, reddit, youtube, and academia. Citation rates across those channels are wildly uneven: the general "search" channel dominates, supplying 88% of cited URLs (Ahrefs, 2025).

Profound's analysis of roughly 730,000 ChatGPT conversations in late 2025 found Wikipedia is ChatGPT's most-cited source at about 7.8% of citations, with Reddit citation rates fluctuating dramatically (close to 60% in early August 2025 before collapsing to around 10% by mid-September) (Profound, 2025).

Why SEO alone falls short on ChatGPT: Bing rank gets you into the candidate set; the OpenAI ranker decides whether you survive to the citation. Standard SEO tools do not track hourly Bing rank changes, cannot detect ref_type assignment, and cannot tell you when ChatGPT abruptly cut Reddit's citation share in mid-September.
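A minimal sketch of that two-stage shape, assuming search rank decides which URLs enter the candidate set and a separate score decides which get cited. The `rerank_score` values are made-up inputs; OpenAI has not published how its ranker scores sources.

```python
def select_citations(search_results, rerank_score, candidate_k=10, cite_n=3):
    """Two-stage sketch: search rank builds the candidate set, a second ranker cites.

    search_results: URLs in search-engine rank order (stage 1).
    rerank_score:   URL -> score from a hypothetical second-stage ranker (stage 2).
    """
    candidates = search_results[:candidate_k]        # only these can ever be cited
    reranked = sorted(candidates,
                      key=lambda url: rerank_score.get(url, 0.0),
                      reverse=True)
    return reranked[:cite_n]


bing_order = ["a.com/1", "b.com/2", "c.com/3", "d.com/4", "e.com/5"]
scores = {"a.com/1": 0.2, "b.com/2": 0.9, "c.com/3": 0.7,
          "d.com/4": 0.8, "e.com/5": 0.99}

# e.com/5 has the best score but sits outside a 4-result candidate set;
# a.com/1 ranks #1 in search but loses at the rerank step.
print(select_citations(bing_order, scores, candidate_k=4, cite_n=2))
```

The #1 search result is not cited, and the highest-scoring URL is never considered because it falls outside the candidate set. A rank tracker sees neither failure.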

Google AI Overviews and AI Mode

This is the case that most undercuts the "SEO is enough" position, because Google AI Overviews are inside Google. If anywhere should reward classical SEO purely, it is here. It does not.

AI Overviews now appear on roughly 25.8% of US searches as of January 2026, up from 18% in March 2025 (Search Engine Land, 2025). When they appear, click-through rate to organic results drops from 15% to 8% per Pew Research, a 46.7% relative reduction (Pew Research, 2025).
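The 46.7% figure is a relative reduction, which is easy to confuse with a drop in percentage points. A quick check using Pew's two click-through rates:

```python
def relative_reduction(baseline, treated):
    """Relative drop from `baseline` to `treated`, as a fraction of the baseline."""
    return (baseline - treated) / baseline


no_aio_ctr, aio_ctr = 0.15, 0.08   # Pew Research, July 2025
print(f"Absolute drop: {100 * (no_aio_ctr - aio_ctr):.0f} percentage points")
print(f"Relative drop: {relative_reduction(no_aio_ctr, aio_ctr):.1%}")
```

The click-through rate falls by 7 percentage points, which is 46.7% of the 15% baseline.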

The selection logic is what an SEO professional should care about most. Google AI Mode uses Gemini for passage-level retrieval, with a technique Google publicly described as "query fan-out" at I/O 2025. The system breaks the original query into 8 to 12 sub-queries, retrieves passages independently for each, and synthesizes citations from the passages that best support the answer (Search Engine Land, 2025).
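A minimal sketch of the fan-out shape described above: several sub-queries, independent passage retrieval for each, and one merged citation list. The sub-queries are written by hand, the passage IDs are hypothetical, and the term-overlap scoring stands in for Google's actual passage ranking, which is not public.

```python
import re


def terms(text):
    """Lowercased alphanumeric terms (toy tokenizer)."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def retrieve(sub_query, passages, k=1):
    """Top-k (score, passage_id) pairs for one sub-query, by term overlap."""
    q = terms(sub_query)
    if not q:
        return []
    scored = [(len(q & terms(text)) / len(q), pid) for pid, text in passages.items()]
    scored.sort(key=lambda s: (-s[0], s[1]))   # best score first, ties by id
    return [s for s in scored[:k] if s[0] > 0]


def fan_out_retrieve(sub_queries, passages, k=1):
    """Retrieve independently per sub-query, then merge into one citation list."""
    best = {}
    for sq in sub_queries:
        for score, pid in retrieve(sq, passages, k):
            best[pid] = max(score, best.get(pid, 0.0))
    return sorted(best, key=lambda pid: -best[pid])


# Hypothetical fan-out for "best CRM for startups"; a real system would
# generate these sub-queries with a model rather than by hand.
sub_queries = ["crm pricing for startups", "crm integrations with slack", "crm setup time"]
passages = {
    "vendor-a#pricing": "CRM pricing tiers for startups and small teams",
    "vendor-a#intro": "Why every company needs a CRM",
    "review-b#slack": "How CRM integrations with Slack work",
    "forum-c#setup": "Setup time for a new CRM was two days",
}
print(fan_out_retrieve(sub_queries, passages))
```

Each sub-query pulls its best passage from a different source, and the generic intro passage is never cited even though it sits on the same page as a cited one.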

This helps explain why AIO source overlap with the organic top 10 dropped from 76% to 38% (ALM Corp, 2026). SE Ranking's analysis of the Gemini 3 upgrade found that approximately 42% of previously cited domains were replaced after the upgrade, with about 32% more source URLs per AIO response (DigitalApplied, 2025).
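To measure a shift like SE Ranking's 42% domain replacement on your own query set, one simple metric is the share of previously cited domains that drop out after a model change. A minimal sketch, assuming you captured cited domains for the same queries before and after the change (the domains are placeholders):

```python
def replacement_rate(cited_before, cited_after):
    """Share of previously cited domains that are absent after a change."""
    before, after = set(cited_before), set(cited_after)
    if not before:
        return 0.0
    return len(before - after) / len(before)


before = ["a.com", "b.com", "c.com", "d.com", "e.com"]
after = ["a.com", "c.com", "e.com", "f.com", "g.com", "h.com"]
print(f"{replacement_rate(before, after):.0%} of earlier domains replaced")
```

Here two of five earlier domains disappear (40%), even though the newer answer cites more domains overall.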

Why SEO alone falls short on AI Overviews: ranking #1 on the SERP no longer guarantees inclusion in the AI Overview above your own ranking. Your H2 might match the user query. The passage three paragraphs down might be what gets extracted and cited. If you cannot see passage-level performance, you cannot optimize for it.

Perplexity

Perplexity is the platform that diverges most sharply from Google's ranking model. It runs its own crawler and applies a strong bias toward news, freshness, and community content. A July 2025 arXiv paper by Kai-Cheng Yang analyzed over 366,000 citations across 65,000 Perplexity responses and characterized AI search systems as "new gatekeepers of the digital information ecosystem" with selection patterns distinct from web search (arXiv, 2025).

Semrush's three-month study of 150,000 AI citations across LLMs found Reddit is the single most-cited domain, referenced in 40.1% of cases. Wikipedia comes in at 26.3%, YouTube at 23.5%, and Google in a similar range (Semrush, 2025). Perplexity in particular over-indexes on Reddit, at roughly 46.7% of community-platform citations (Profound, 2025).

Perplexity also weights freshness aggressively. Pages without refreshes lose visibility within roughly a 30-day window (AuthorityTech, 2026).
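If the roughly 30-day window applies to your pages, a basic refresh audit is easy to automate. A minimal sketch, assuming you already have a last-updated date per URL (for example from your CMS or your sitemap's `lastmod` values). The 30-day threshold comes from the figure above and is a tunable assumption, not a documented Perplexity rule.

```python
from datetime import date, timedelta


def stale_pages(last_updated, today, window_days=30):
    """URLs whose last update falls before the freshness window (sorted)."""
    cutoff = today - timedelta(days=window_days)
    return sorted(url for url, updated in last_updated.items() if updated < cutoff)


last_updated = {
    "/pricing": date(2025, 9, 15),
    "/guide": date(2025, 6, 1),
    "/faq": date(2025, 8, 31),   # exactly 30 days old: still inside the window
}
print(stale_pages(last_updated, today=date(2025, 9, 30)))
```

Only `/guide` is flagged; adjust `window_days` if your own monitoring shows a different decay curve.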

Why SEO alone falls short on Perplexity: evergreen pillar content optimized for backlinks and on-page SEO can be entirely invisible if it is not regularly updated and not surfaced in Reddit or YouTube secondary signals. There is no Google equivalent to "your AI visibility halved because you did not republish in 30 days."

Claude

Anthropic's Claude uses Brave Search as its web search provider, documented in the Claude API reference (Anthropic Docs). Claude's citation system is built into the API, with each search result returning structured citation metadata that the consuming application can render (Anthropic, 2025).
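Because citations come back as structured data, you can check whether a URL was cited, or merely retrieved, by parsing the response. A minimal sketch, assuming a Messages API response already decoded to a Python dict. The block and field names (`web_search_tool_result`, `web_search_result`, `citations`, `web_search_result_location`) follow Anthropic's web search tool documentation as we understand it; verify them against the current API reference. The sample response is hand-written, not real API output.

```python
def cited_urls(response):
    """Unique URLs Claude cited, in first-seen order.

    Assumes the documented web search tool shape: `text` content blocks may
    carry a `citations` list with entries of type "web_search_result_location".
    """
    urls = []
    for block in response.get("content", []):
        if block.get("type") != "text":
            continue
        for cite in block.get("citations") or []:
            url = cite.get("url")
            if cite.get("type") == "web_search_result_location" and url not in urls:
                urls.append(url)
    return urls


def retrieved_urls(response):
    """URLs returned by the search step, whether or not they were cited."""
    urls = []
    for block in response.get("content", []):
        if block.get("type") == "web_search_tool_result":
            results = block.get("content")
            if isinstance(results, list):   # an error result is an object, not a list
                urls.extend(r["url"] for r in results
                            if r.get("type") == "web_search_result")
    return urls


# Hand-written sample shaped like a decoded Messages API response (not real output).
response = {
    "content": [
        {"type": "server_tool_use", "id": "srvtoolu_01", "name": "web_search",
         "input": {"query": "best crm for startups"}},
        {"type": "web_search_tool_result", "tool_use_id": "srvtoolu_01",
         "content": [
             {"type": "web_search_result", "url": "https://example.com/a", "title": "A"},
             {"type": "web_search_result", "url": "https://example.org/b", "title": "B"},
         ]},
        {"type": "text", "text": "Example A recommends...",
         "citations": [{"type": "web_search_result_location",
                        "url": "https://example.com/a", "title": "A",
                        "cited_text": "..."}]},
    ]
}

retrieved, cited = retrieved_urls(response), cited_urls(response)
print("retrieved but not cited:", [u for u in retrieved if u not in cited])
```

The "retrieved but not cited" list is the interesting one for visibility work: those pages made it through Brave's index but not through the citation step.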

Claude's selection bias is different from ChatGPT's because the underlying index is different. Brave Search is independent of both Google and Bing. That means content discoverability on Claude depends on Brave's crawler, Brave's ranking signals, and Anthropic's filtering on top.

Why SEO alone falls short on Claude: ranking on Google or Bing tells you nothing about your Brave Search visibility. Brave's index is significantly smaller and weighted differently. If you do not monitor Claude responses directly, you cannot tell whether you are present in its citation set.

Microsoft Copilot

Copilot pulls from the Bing index and surfaces citations as inline footnotes. Bing top-10 rank is a strong predictor. Microsoft's algorithm evaluates content freshness, domain authority, and semantic relevance for source selection (Pedowitz Group, 2025).

The behavioral pattern that breaks the SEO-only model on Copilot is concentration. Copilot tends to cite one or two sources per response, not the ten Google would link to (Search Influence, 2025). Those one or two sources absorb almost all the visibility.
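One way to quantify that concentration in your own monitoring data is the share of citations captured by the top one or two domains. A minimal sketch; both citation samples are invented to contrast a concentrated pattern with a spread-out one:

```python
from collections import Counter


def top_k_share(cited_domains, k=2):
    """Share of all citations captured by the k most-cited domains."""
    counts = Counter(cited_domains)
    total = sum(counts.values())
    if total == 0:
        return 0.0
    return sum(n for _, n in counts.most_common(k)) / total


# Invented samples: 10 citations each across a set of tracked prompts.
concentrated = ["a.com"] * 6 + ["b.com"] * 3 + ["c.com"]
spread_out = [f"site{i}.com" for i in range(10)]

print(f"concentrated: {top_k_share(concentrated):.0%}")   # top two domains' share
print(f"spread out:   {top_k_share(spread_out):.0%}")
```

The concentrated sample gives the top two domains 90% of citations; the spread-out sample gives them 20%.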

Why SEO alone falls short on Copilot: "ranking on page one" used to mean ten chances at being clicked. On Copilot, it means competing for the one or two slots a synthesized answer will cite. That is a different optimization problem and the historical SEO tooling does not measure it.

A note on cross-platform coverage

The strongest single argument against "optimize for SEO and you are done" is this Semrush finding: across ChatGPT, Perplexity, and Google AI features, only 11% of domains are cited by both ChatGPT and Perplexity, and only 12% of sources are cited across all three platforms (Semrush, 2025).

Put differently, most sources that earn a citation on one platform do not earn one on the others. SEO can drive Google visibility. It cannot drive cross-platform AI visibility, because the platforms do not share the same selection logic.
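If you collect cited domains per platform for the same prompt set, you can reproduce this kind of overlap measurement for your own category. A minimal sketch; the overlap definitions (Jaccard for pairs, shared-by-all for the full set) and the sample data are assumptions, and Semrush's methodology may differ:

```python
from itertools import combinations


def overlap_report(cited):
    """Pairwise Jaccard overlap and all-platform overlap of cited domains.

    `cited` maps platform name -> iterable of cited domains. Jaccard
    (|A & B| / |A | B|) is one reasonable definition of overlap; published
    studies may define it differently.
    """
    sets = {platform: set(domains) for platform, domains in cited.items()}
    pairwise = {}
    for a, b in combinations(sorted(sets), 2):
        union = sets[a] | sets[b]
        pairwise[(a, b)] = len(sets[a] & sets[b]) / len(union) if union else 0.0
    everything = set().union(*sets.values())
    shared = set.intersection(*sets.values()) if sets else set()
    all_platforms = len(shared) / len(everything) if everything else 0.0
    return pairwise, all_platforms


cited = {
    "chatgpt": ["a.com", "b.com", "c.com", "d.com"],
    "perplexity": ["c.com", "d.com", "e.com", "f.com"],
    "google_aio": ["a.com", "d.com", "g.com", "h.com"],
}
pairwise, all_platforms = overlap_report(cited)
for pair, score in pairwise.items():
    print(pair, f"{score:.0%}")
print(f"cited on all platforms: {all_platforms:.1%}")
```

In this sample, one domain in eight is cited on all three platforms.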

If your buyer is asking ChatGPT today and Perplexity tomorrow and Claude next week, you need a tool that tells you where you appear and where you do not. You cannot infer it from Search Console.

Engaging the strongest counter-argument

The most respected pushback comes from Aleyda Solis, who has consistently and correctly pointed out that the AI search shift is real but smaller than the noise suggests. Her September 2025 data point: ChatGPT had 5.8B visits in August 2025 versus Google's 83.8B. LLMs still drive less than 5% of revenue at most sites, while SEO drives over 50% (Aleyda Solis on X, 2025).

She is right about the current snapshot, and it is the right number to start with. But the snapshot is moving: ChatGPT crossed 800 million weekly active users in October 2025, up from 500 million in March, a 60% jump in seven months (TechCrunch, October 2025). That is the part of the curve to plan around.

The conclusion that follows from her data is not "ignore AI search." It is "do not abandon SEO for AI search." Those are different.

Three observations on top of Aleyda's framing:

AI referral traffic is small but compounding fast. Adobe measured a 13x increase from July 2024 to May 2025, with retail up 693% YoY and travel up 33x (Adobe, 2025). Small base, rapid compounding.

The traffic that does come through converts dramatically better. Similarweb's 2025 ecommerce data shows ChatGPT-referred traffic converts at 11.4% versus 5.3% for organic search (Similarweb, 2025). The unit economics flip the math even at small volumes.
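To see what a higher conversion rate does at small volumes, here is the arithmetic with the two Similarweb rates quoted above and a hypothetical target of 100 conversions. It ignores differences in order value and attribution, so treat it as a rough illustration.

```python
def visits_needed(target_conversions, conversion_rate):
    """Visits required to produce `target_conversions` at a given rate."""
    return target_conversions / conversion_rate


ORGANIC_CR = 0.053   # Similarweb 2025, organic search
CHATGPT_CR = 0.114   # Similarweb 2025, ChatGPT referrals

print(f"ChatGPT/organic conversion ratio: {CHATGPT_CR / ORGANIC_CR:.2f}x")
print(f"Visits for 100 conversions: organic {visits_needed(100, ORGANIC_CR):.0f}, "
      f"ChatGPT {visits_needed(100, CHATGPT_CR):.0f}")
```

At these rates ChatGPT traffic converts about 2.15 times as well, so roughly 877 ChatGPT visits yield the same 100 conversions as about 1,887 organic visits.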

The relevant metric is not "how many users search on ChatGPT" but "how many of your buyers do." B2B SaaS, financial services, and high-consideration purchases skew far higher than the population baseline. Pew's data shows informational queries are most exposed to AI Overviews, and informational queries are exactly where most B2B purchase research happens.

The SEO-purist conclusion misses this because it weighs SEO's current revenue contribution against AI search's current revenue contribution. The right comparison is SEO's current revenue against AI search's projected revenue on the trajectory it is actually on. No CFO runs a budget against last quarter. They run it against the next four.

What "specialized tooling" actually means

If you accept that AI visibility is correlated with but distinct from SEO, the next question is what monitoring infrastructure to add. Here is the honest answer.

Traditional SEO tools (Ahrefs, Semrush, Search Console) were built around a one-query, one-ranked-list model. They tell you where you rank and who links to you. They do not tell you:

Whether your brand appears in ChatGPT responses for your target queries

Which passages, not just which pages, Google AI Mode is extracting

How your share of voice on Perplexity compares to your competitors week over week

Whether Claude is citing you at all (Brave-indexed, separate from Google)

Whether Copilot is consolidating its citations on a single competitor

You need a dedicated AI visibility layer for those things. There are now ten or so tools in this category, with different tradeoffs around platform coverage, pricing, and integration depth.
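At its simplest, share of voice on one platform is the percentage of tracked prompts whose answer mentions a brand. A minimal sketch of that calculation plus a week-over-week comparison; the brand names and responses are placeholders, and dedicated tools use more robust brand matching than a substring check:

```python
def share_of_voice(responses, brands):
    """Percent of responses mentioning each brand (case-insensitive substring match).

    Substring matching is a simplifying assumption: it misses aliases and can
    over-match (e.g. a brand name inside a longer word).
    """
    if not responses:
        return {brand: 0.0 for brand in brands}
    lowered = [r.lower() for r in responses]
    return {brand: 100 * sum(brand.lower() in r for r in lowered) / len(lowered)
            for brand in brands}


def week_over_week(this_week, last_week):
    """Change in share of voice, in percentage points, per brand."""
    return {b: this_week[b] - last_week.get(b, 0.0) for b in this_week}


brands = ["Acme", "Globex", "Initech"]
last_week = share_of_voice(
    ["Acme is the usual pick", "Acme or Globex", "Initech leads on price", "Acme"], brands)
this_week = share_of_voice(
    ["Acme and Globex both fit", "Globex is the usual pick",
     "Consider Initech or Globex", "Acme leads on price"], brands)
print(week_over_week(this_week, last_week))
```

In this sample, Globex gains 50 points week over week, Acme loses 25, and Initech is flat.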

| Capability | Traditional SEO Tool | AI Visibility Tool |
|---|---|---|
| Google organic rank tracking | Yes | Usually no |
| Backlink analysis | Yes | No |
| ChatGPT citation tracking | No | Yes |
| Perplexity share of voice | No | Yes |
| Google AI Overview source presence | Limited | Yes |
| Claude / Copilot monitoring | No | Yes (varies) |
| Passage-level AIO insight | No | Yes (some) |
| Cross-platform competitor benchmarking | No | Yes |

The two stacks are complementary, not substitutes. SEO tools tell you about the SERP. AI visibility tools tell you about the synthesized answer that increasingly sits above the SERP.

What we built and how to think about it

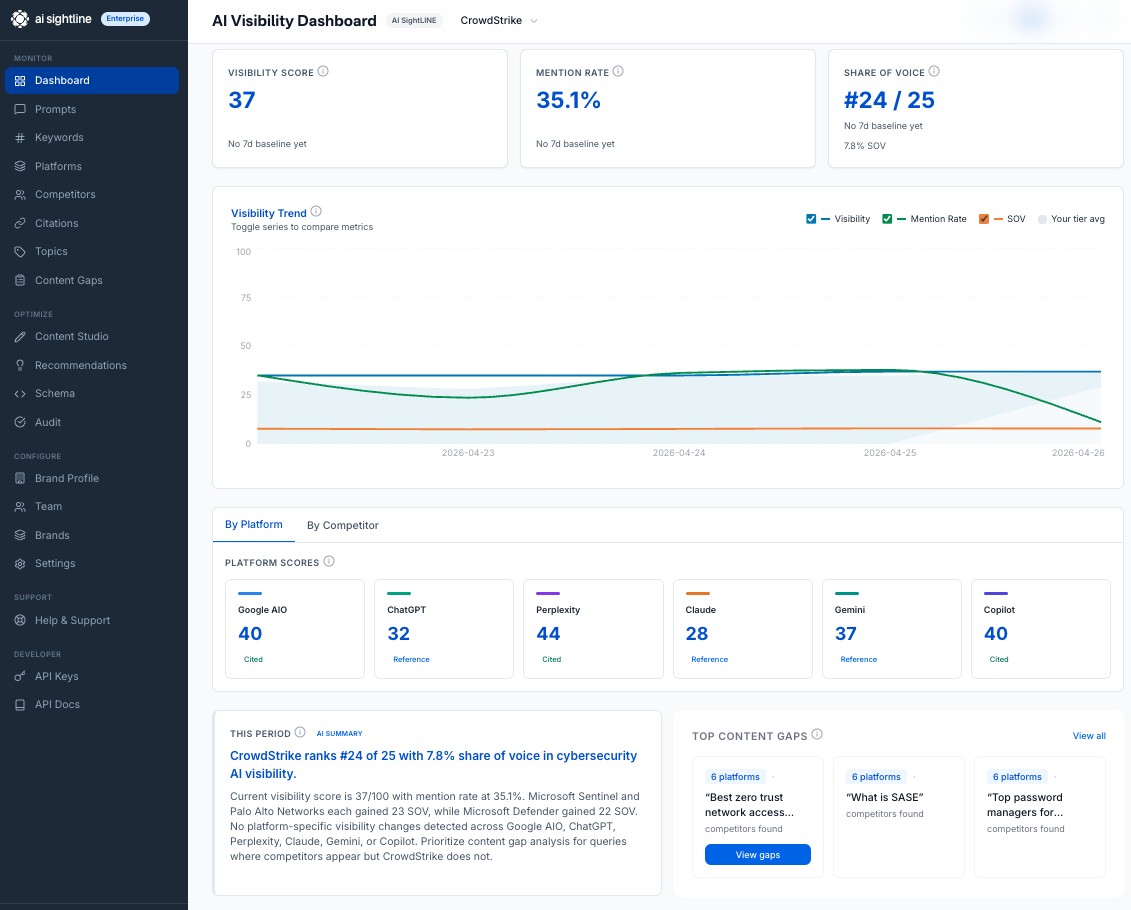

AI Sightline is the AI visibility tool we built for the gap described above. It monitors six AI platforms (ChatGPT, Google AI Overviews, Perplexity, Claude, Copilot, Gemini) on a daily cadence, tracks share of voice against competitors, surfaces specific recommendations tied to Princeton-style content interventions, and exposes everything through both a REST API and an MCP server so AI agents can query your visibility data directly.

Pricing: Free for 3 prompts and 2 platforms, Starter at $29.95/mo for 4 platforms, Pro at $64.95/mo for 5 platforms and full API access, Business at $139.95/mo for 6 platforms and 25 brands. The full breakdown is on the pricing page.

We are not the only tool in this space. Profound is excellent at the enterprise tier. Peec, Otterly, and Scrunch each have their own positioning. The point is not "use AI Sightline." The point is that you need something in this category if you want to know whether SEO has stopped being enough for your specific buyers and your specific queries. You cannot answer that question with Search Console.

The bottom line for SEO professionals

SEO is the floor. It always was. The argument was never that ranking on Google is unimportant. The argument is that ranking on Google is the entry condition, not the exit condition.

The data from Pew, Ahrefs, Princeton, Semrush, Adobe, Similarweb, and Profound, gathered independently across 2024 and 2025, points the same direction:

Click rates from Google are dropping when AI Overviews appear.

URL-level overlap between Google rankings and AI citations is between 12% and 38% depending on platform.

AI referral traffic is small but grew 13x in under a year, and ChatGPT-referred visitors convert at roughly twice the rate of organic visitors.

The five major AI search platforms use different retrieval stacks with different selection biases, and few sources are cited across all of them.

Specific content interventions (statistics, citations, passage structure) move AI visibility independent of SERP rank.

A professional who ignores these signals because "GEO is just SEO" is making the same call as those who said "mobile is just desktop" in 2010. The foundation is shared. The optimization layer is not.

If you want to see what your brand looks like in actual LLM responses today, you can run a free AI visibility scan in about 30 seconds. No credit card. The point is not to sell you a tool; it is to give you the data to make your own argument.

Sources and further reading

Pew Research Center: Google users are less likely to click on links when an AI summary appears (July 2025)

Ahrefs: ChatGPT may scrape Google, but the results don't match

Ahrefs: Why ChatGPT cites one page over another (1.4M prompts)

Aggarwal et al.: GEO: Generative Engine Optimization (Princeton, KDD 2024)

Yang: Citation patterns in AI search systems (arXiv, July 2025)

ALM Corp: Google AI Overview citations from top-10 dropped from 76% to 38%

Search Engine Land: How different AI engines generate and cite answers

Seer Interactive: 87% of SearchGPT citations match Bing top results

TechCrunch: Sam Altman says ChatGPT has hit 800M weekly active users

SE Ranking analysis of Gemini 3 AIO impact (via DigitalApplied)

Search Influence: Inside Bing's AI performance report (20K Copilot citations)

Solo founder building AI visibility monitoring. Ships weekly. No venture capital, a lot of opinions about where AI search is going.