What Is an AI Visibility Score and Why It Matters

An AI visibility score is a composite measurement of your brand's presence and prominence across AI search platforms—including ChatGPT, Claude, Perplexity, Google's AI Overviews, Gemini, and Microsoft Copilot. It quantifies how often your content appears in AI-generated responses, where those responses rank your brand relative to competitors, and what sentiment the AI attributes to your mention.

The score matters because AI search is no longer a niche. According to AI Sightline data from Q1 2026, AI search referral traffic grew 3,500% in 2025, and roughly 25% of Google searches now trigger AI Overviews. If your content doesn't rank in these systems, you don't exist to a growing slice of your audience.

Unlike traditional SEO, where you optimize for a single algorithm, AI visibility requires you to understand six platforms at once, each with its own indexing mechanisms, citation preferences, and ranking signals. A visibility score collapses this complexity into one number you can track, benchmark, and act on. If generative engine optimization (GEO) and how it differs from SEO are new to you, start with that background before going further.

The 5 Key Metrics Behind Your AI Visibility Score

1. Citation Rate: How Often AI Models Mention You

Citation rate is the percentage of AI responses for your tracked keywords that mention your brand or cite your content. This is your baseline—if you're not cited at all, you have zero visibility.

A citation rate above 15% for branded keywords is table stakes. For competitive, non-branded keywords in your space, anything above 5% means you're competing effectively. Below 2%, you're invisible.
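The arithmetic is simple enough to sketch. The example below is illustrative only: it assumes you already have the raw text of each AI response for one tracked keyword, and it uses a case-insensitive substring check as a stand-in for real mention detection. The function names and the brand "Acme Analytics" are hypothetical.

```python
def citation_rate(responses: list[str], brand: str) -> float:
    """Percentage (0-100) of responses that mention the brand at least once."""
    if not responses:
        return 0.0
    needle = brand.lower()
    hits = sum(1 for text in responses if needle in text.lower())
    return 100.0 * hits / len(responses)


def citation_band(rate: float, branded: bool) -> str:
    """Map a rate to the rough thresholds quoted above.

    The article gives one floor for branded keywords (15%) and two
    cut-offs for non-branded ones (5% and 2%). The labels for the
    ranges in between are assumptions made for this sketch.
    """
    if branded:
        return "table stakes met" if rate > 15 else "below table stakes"
    if rate > 5:
        return "competing effectively"
    if rate < 2:
        return "invisible"
    return "marginal"  # assumed label for the 2-5% range


sample = [
    "Acme Analytics is a solid choice for dashboards.",
    "Most teams start with Tableau.",
    "Compare acme analytics with Looker before deciding.",
    "Looker is popular with data teams.",
]
rate = citation_rate(sample, "Acme Analytics")
print(rate, citation_band(rate, branded=False))
```

In practice the mention check would need to handle product aliases and linked citations, which a plain substring match misses.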

The catch: citation rate alone doesn't tell the full story. An AI could cite you negatively or in a minor clause. This is where the next metrics sharpen the picture.

2. Sentiment: What's the Context Around Your Mention?

Sentiment measures whether your brand is cited positively, neutrally, or negatively in AI responses. A mention in a competitor comparison where you're positioned as second-tier is very different from a mention where the AI recommends your product first.

According to AI Sightline's data, brands tracking sentiment alongside citation rate improve their messaging 3x faster because they see exactly which talking points resonate and which comparisons hurt. If you're cited 50 times but 30 of those are "Company X offers a cheaper alternative," your sentiment is dragging your score down.

Positive sentiment (70%+) combined with high citation rate is where real visibility lives.
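To make the 70% threshold concrete, here is a minimal sketch that assumes each citation has already been labeled "positive", "neutral", or "negative" by some upstream classifier. The label names, the helper functions, and the 15/5 split of the remaining citations in the sample are assumptions; only the 50 citations with 30 unfavorable mentions come from the example above.

```python
from collections import Counter

LABELS = ("positive", "neutral", "negative")


def sentiment_breakdown(labels: list[str]) -> dict[str, float]:
    """Return the percentage share of each sentiment label."""
    counts = Counter(labels)
    total = sum(counts[label] for label in LABELS)
    if total == 0:
        return {label: 0.0 for label in LABELS}
    return {label: 100.0 * counts[label] / total for label in LABELS}


def meets_positive_target(breakdown: dict[str, float], target: float = 70.0) -> bool:
    """True when the positive share reaches the target percentage."""
    return breakdown["positive"] >= target


# 50 citations, 30 of them unfavorable comparisons.
labels = ["negative"] * 30 + ["positive"] * 15 + ["neutral"] * 5
breakdown = sentiment_breakdown(labels)
print(breakdown, meets_positive_target(breakdown))
```

With 60% of mentions negative, this brand is far from the 70% positive mark even though it is cited often.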

3. Share of Voice: Your Slice of the Attention Pie

Share of Voice (SoV) is your citations divided by total citations for a given keyword topic. If a keyword topic generates 100 citations across all competitors, and you get 15, your SoV is 15%.

This metric exposes the brutal truth: you might have citations, but if your competitors have more, you're losing. SoV above 25% in your core keywords means you're a clear leader. Between 15% and 25%, you're competitive. Below 10%, you're in the tail.

SoV is where benchmarking gets real because it forces comparison. You can't just improve your visibility in absolute terms; you have to improve it faster than competitors improve theirs.
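As a rough sketch of the calculation, assuming you have citation counts per brand for one keyword topic (the brand names and function names are hypothetical):

```python
def share_of_voice(citations_by_brand: dict[str, int], brand: str) -> float:
    """Brand citations as a percentage of all citations for the topic."""
    total = sum(citations_by_brand.values())
    if total == 0:
        return 0.0
    return 100.0 * citations_by_brand.get(brand, 0) / total


def sov_band(sov: float) -> str:
    """Apply the bands quoted above.

    The article does not classify the 10-15% range, so this sketch
    reports it as "unclassified" rather than guessing.
    """
    if sov > 25:
        return "clear leader"
    if sov >= 15:
        return "competitive"
    if sov < 10:
        return "tail"
    return "unclassified"


counts = {"YourBrand": 15, "CompetitorA": 50, "CompetitorB": 35}
sov = share_of_voice(counts, "YourBrand")
print(sov, sov_band(sov))
```

Because the denominator includes every competitor's citations, your SoV can fall even while your own citation count rises.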

4. Position in Response: First Mention vs. Buried

AI responses that mention 5 brands usually place the most relevant ones first. Position tracks where your brand lands in these multi-brand responses.

First position (a first mention in the top 20% of the response) is worth 3x the visibility weight of third position (a first mention 60% or more of the way through the response). An AI that cites you early signals to the user that you're a primary contender. An AI that buries you in the tail suggests you're a minor option.

Track position not just for presence, but for prominence. You want citations that matter.
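One way to approximate position is to measure how far into the response your first mention appears. The sketch below assumes character offset is a reasonable proxy for prominence and that a substring match finds the mention. The 3x and 1x weights follow the ratio quoted above; the 2x weight for the middle of the response is an assumption.

```python
from typing import Optional


def relative_position(response: str, brand: str) -> Optional[float]:
    """Offset of the first brand mention as a fraction of response length.

    Returns None when the brand is not mentioned.
    """
    if not response or not brand:
        return None
    index = response.lower().find(brand.lower())
    if index < 0:
        return None
    return index / len(response)


def position_weight(rel: Optional[float]) -> float:
    """Weight a mention by where it first appears in the response."""
    if rel is None:
        return 0.0  # not mentioned at all
    if rel <= 0.20:
        return 3.0  # first position: top 20% of the response
    if rel >= 0.60:
        return 1.0  # third position: 60% or more of the way through
    return 2.0      # assumed weight for the middle band


response = (
    "Acme is the usual first pick. "
    + "Filler sentence. " * 10
    + "Globex is also an option."
)
for name in ("Acme", "Globex", "Initech"):
    print(name, position_weight(relative_position(response, name)))
```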

5. Platform Coverage: How Many AI Systems Know You?

Platform coverage measures how many of the major AI search systems cite you for your tracked keywords. Relying on a single platform (even if it's ChatGPT) is a single point of failure.

Full platform coverage means you're cited in ChatGPT, Claude, Perplexity, Google AI Overviews, Gemini, and Microsoft Copilot. This diversification ensures that no matter which AI system a user queries, your brand has a shot at visibility.
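A small sketch of the coverage check, assuming you can produce the set of platforms that cited you at least once for your tracked keywords (the function name is hypothetical; the platform list is the one given above):

```python
PLATFORMS = (
    "ChatGPT",
    "Claude",
    "Perplexity",
    "Google AI Overviews",
    "Gemini",
    "Microsoft Copilot",
)


def platform_coverage(cited_on: set[str]) -> tuple[float, list[str]]:
    """Return the covered fraction (0-1) and the platforms still missing."""
    missing = [p for p in PLATFORMS if p not in cited_on]
    covered = len(PLATFORMS) - len(missing)
    return covered / len(PLATFORMS), missing


fraction, missing = platform_coverage({"ChatGPT", "Perplexity"})
print(f"{fraction:.0%} covered, missing: {missing}")
```

The list of missing platforms is usually more actionable than the fraction itself, since it tells you where to look first.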

How Benchmarking Works: What "Good" Looks Like

Benchmarking your AI visibility requires three reference points: your historical performance, your competitors' performance, and industry standards.

Your Own Baseline

Start by measuring your current state across your core 5-10 keywords. Don't cherry-pick the ones you know you're strong in—measure the strategic ones. After 30 days of tracking, you'll have a baseline.

Then set incremental targets. If your citation rate is 8%, your goal is 12% in 60 days, then 15% in 120 days. Small, measurable progress builds momentum.

Competitor Benchmarking

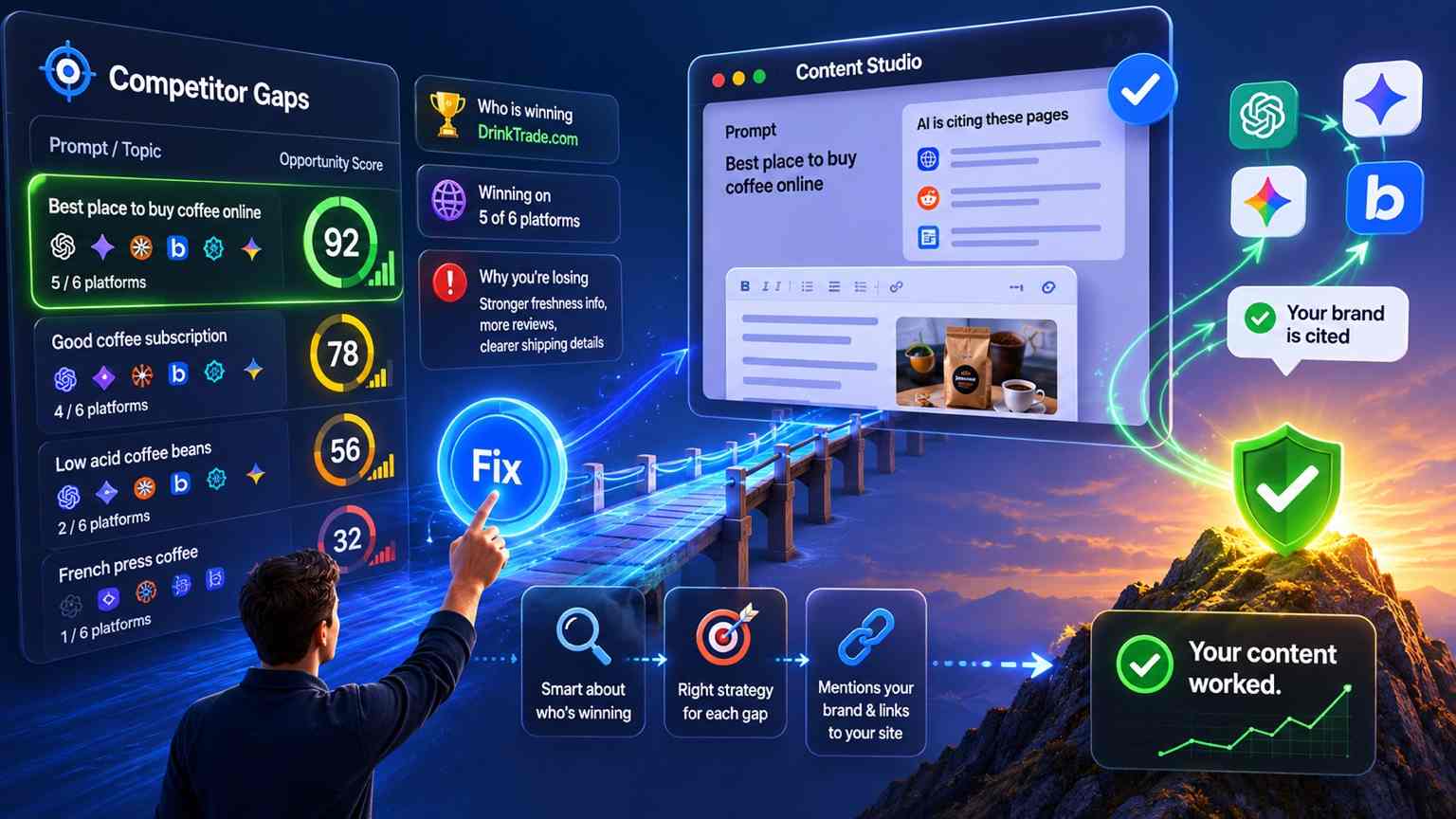

Identify your 3-4 closest competitors and track their scores alongside yours. According to AI Sightline's data, 78% of brands that implement competitor benchmarking improve their SoV within 60 days because they see what's working for others.

The comparison doesn't need to be public. You're measuring to understand where the bar is, not to close the gap perfectly. If a competitor has 35% SoV and you have 12%, you're not failing—you're identifying an opportunity.

Industry Standards

AI visibility varies wildly by industry. A B2B SaaS company competing on technical keywords faces different AI citation patterns than a consumer brand. E-commerce brands see different sentiment patterns than financial services.

Use your tool's industry reports to anchor your expectations. If your industry average SoV is 18% and you're at 22%, you're ahead. If you're at 8%, you have clear work to do.

How to Measure: A Step-by-Step Workflow

Step 1: Define Your Keywords

Don't measure everything. Start with your core 10-15 keywords that drive revenue or brand awareness. These should be a mix of branded, category, and competitor-comparison keywords.

Example for a SaaS analytics company:

Branded: "Your Product Name", "Your Company analytics"

Category: "AI-powered analytics platform", "real-time data visualization"

Comparison: "Tableau vs. Your Product", "Your Product vs. Looker"
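If you keep the keyword list in code or a config file, grouping it by type keeps the mix visible. This is only one possible layout, using the placeholder keywords from the example above; the variable and function names are hypothetical.

```python
TRACKED_KEYWORDS = {
    "branded": ["Your Product Name", "Your Company analytics"],
    "category": ["AI-powered analytics platform", "real-time data visualization"],
    "comparison": ["Tableau vs. Your Product", "Your Product vs. Looker"],
}


def flatten(keywords: dict[str, list[str]]) -> list[tuple[str, str]]:
    """Turn the grouped config into (group, keyword) pairs for a tracker."""
    return [(group, kw) for group, kws in keywords.items() for kw in kws]


def group_counts(keywords: dict[str, list[str]]) -> dict[str, int]:
    """Count keywords per group to check the mix is not lopsided."""
    return {group: len(kws) for group, kws in keywords.items()}


print(len(flatten(TRACKED_KEYWORDS)), group_counts(TRACKED_KEYWORDS))
```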

Step 2: Set Up Platform Tracking

Most AI visibility tools let you select which platforms to monitor. At minimum, track the six major systems: ChatGPT, Claude, Perplexity, Google AI Overviews, Gemini, and Microsoft Copilot.

If you're on AI Sightline's Pro plan or higher, you get all six platforms. This is critical—measuring only ChatGPT or only Perplexity gives you a false sense of security because you're missing 5/6 of the battlefield.

Step 3: Configure Your Baseline

Run a measurement sweep across all keywords and platforms. You'll get citation count and rate, sentiment breakdown, position data, and which platforms cite you.

Save this as your "Month 0" baseline. Don't compare yourself to competitors yet—just understand your own state.

Step 4: Set Up Automated Refreshes

Don't measure manually every week. Set your tool to refresh your keywords automatically on a schedule (2x per week minimum). This gives you trending data without busywork.

AI Sightline's Starter plan runs a 3-day rolling scan cycle across 4 platforms. Pro and above run a daily rolling cycle, so visibility changes show up in your dashboard within 24 hours of every scan.

Step 5: Review Weekly, Act Monthly

Spend 15 minutes per week reviewing your dashboard. Note which keywords are trending up, which are sliding, and which sentiment shifted.

Once a month, sit down and ask: "What changed? Why?" If your SoV dropped 5 points, was it because a competitor launched new content, or because the platforms updated their indexing? This context drives your optimization strategy.
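The monthly review is easier when large movements are surfaced automatically. Here is a minimal sketch, assuming you export per-keyword SoV (in percent) for two periods as plain dictionaries. The 5-point threshold mirrors the example above; the function name and sample keywords are hypothetical.

```python
def sov_changes(
    previous: dict[str, float],
    current: dict[str, float],
    threshold: float = 5.0,
) -> dict[str, float]:
    """Return keywords whose SoV moved by at least `threshold` points.

    Keywords missing from either period are skipped, since there is
    nothing to compare them against.
    """
    changes = {}
    for keyword, value in current.items():
        if keyword not in previous:
            continue
        delta = value - previous[keyword]
        if abs(delta) >= threshold:
            changes[keyword] = delta
    return changes


last_month = {"ai analytics platform": 20.0, "real-time analytics": 12.0}
this_month = {
    "ai analytics platform": 14.0,
    "real-time analytics": 13.0,
    "data visualization": 5.0,
}
print(sov_changes(last_month, this_month))
```

The output tells you which keywords to investigate. Working out why they moved still takes the manual review described above.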

Step 6: Use Optimization Suggestions

Here's where measurement connects to action. AI Sightline (Pro tier and above) generates AI-powered optimization suggestions—75 per month on Pro, 300 on Business. These aren't guesses—they're derived from analyzing why competitors are outranking you.

A suggestion might read: "Your competitor's content on 'AI analytics platform' includes 3 specific case studies in the first section. Your content has zero case studies. Adding 2 case studies would likely improve your citation rate by 4-6%."

Act on 2-3 of these suggestions per week. In 30 days, you'll see citation rate movement.

Tools for Measuring AI Visibility: A Comparison

| Feature | AI Sightline | Otterly | Scrunch | Profound | Semrush |
|---|---|---|---|---|---|
| Platforms Tracked | 6 | 6 | 3-6 | 5 | 1 |
| Entry Price | Free ($0) | $29/mo | $99/mo | $149/mo | $99/mo add-on |
| All 6 Platforms At | $132/mo | $99+/mo | $99/mo | N/A | N/A |
| REST API | Yes (from Starter) | Limited | Yes | Yes | No |
| MCP Server | Yes (from Pro) | No | No | No | No |
| Optimization Suggestions | 75/mo (Pro) | Yes | No | Yes | Limited |
| Content Audit | 25 pages/mo (Pro) | No | No | Yes | No |
| Competitor Tracking | Up to 50 | Up to 10 | Yes | Yes | Limited |

Why this matters: If you're tracking 5 platforms and need an API for custom automation, AI Sightline's $64/mo Pro plan is the best value in the market for teams that need programmatic access. The MCP Server is the differentiator: it lets AI agents query your visibility data in real time, and no other tool in this comparison offers that.

Common Measurement Mistakes That Kill Your Progress

Mistake 1: Measuring Too Many Keywords at Once

Tracking 50 keywords sounds thorough. It's actually paralyzing. You get noisy data, you can't act on all of it, and you burn out trying to optimize everything.

Start with 10 keywords. Master those. Then add 10 more. This sequential approach forces prioritization and makes trends visible faster.

Mistake 2: Obsessing Over One Platform

"Our ChatGPT citation rate is 25%, so we're winning." Then you check Perplexity and you're at 3%. You weren't winning—you were winning in one system.

Measure all six platforms or accept that you're flying blind. If a platform doesn't matter to your audience, that's a separate decision—but at least know where you stand first.

Mistake 3: Confusing Citation Rate With Business Impact

High citation rate sounds great until you realize those citations come from platforms your audience doesn't use. If 90% of your customers use Claude and Perplexity, and you only track ChatGPT, your metric is noise.

Weight your platforms by audience relevance. If 50% of your target market uses Perplexity, weight Perplexity citations higher.
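Here is a sketch of that weighting, assuming you have a per-platform citation rate (in percent) and an estimate of what share of your audience uses each platform. The audience shares below are made up for illustration; the 25% and 3% rates reuse the ChatGPT and Perplexity figures from Mistake 2.

```python
def weighted_citation_rate(
    rates: dict[str, float],
    audience_share: dict[str, float],
) -> float:
    """Average citation rate weighted by each platform's audience share.

    Platforms with no audience-share entry get zero weight.
    """
    total_weight = sum(audience_share.get(p, 0.0) for p in rates)
    if total_weight == 0:
        return 0.0
    weighted = sum(rate * audience_share.get(p, 0.0) for p, rate in rates.items())
    return weighted / total_weight


rates = {"ChatGPT": 25.0, "Perplexity": 3.0}
audience = {"ChatGPT": 0.5, "Perplexity": 0.5}  # hypothetical shares
print(weighted_citation_rate(rates, audience))
```

A headline 25% on ChatGPT becomes a weighted 14% once half the audience is on a platform where you are barely cited.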

Mistake 4: Not Accounting for Algorithm Changes

If your SoV dropped 8 points last month, it could be a competitor publishing better content, the AI platforms reindexing their training data, your core keywords becoming more competitive, or you publishing weaker content.

Without context, you can't tell. Keep a simple changelog: "Platform update on March 15. Score dipped 6 points. Recovered by April 1." Over time, these patterns reveal what's signal vs. noise.

Mistake 5: Setting Targets Without Baseline Data

"We want 50% SoV" sounds ambitious. It's meaningless if your industry average is 12%. Set targets relative to your baseline and relative to competitors.

A 25% improvement in SoV in 90 days is a brutally hard target that shows real execution. A 50% target without knowing your starting point is hope, not strategy.

How to Improve Your AI Visibility Score

Measurement is only half the battle. The other half is acting on what you measure.

1. Content Positioning: Earn Early Citations

AI models cite you first when your content answers the question fastest and most credibly. This isn't about keyword stuffing—it's about structure.

If "AI analytics platform" is a tracked keyword and competitors are cited first, read their top-cited content. They probably answer the question in the first sentence, include specific data early, and have better schema markup.

Rewrite your content to match this pattern. According to AI Sightline's data, content repositioning (keeping the same content, reordering for early answers) improves citation rate by 12-18% within 30 days.

2. Topic Authority: Deepen Your Coverage

Brands with high sentiment often cluster around certain keywords. If you're cited positively for "data analytics" but not "real-time analytics," it signals a gap.

Use your visibility data to identify adjacent topics where you have weak coverage. Then publish deep, authoritative content on those topics. Pro and Business plan users get content audit features that analyze your existing content against AI citation patterns, showing exactly which gaps to fill.

3. Schema Markup: Help AI Understand You

Rich schema markup (schema.org types such as Person for authors, Organization, and CreativeWork subtypes like Article) helps AI models understand your content's context and credibility. Brands with proper schema are cited 3-4x more often than brands with minimal markup.

This is a technical fix with a high ROI. If you're not implementing schema, you're leaving citations on the table.
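As a minimal sketch of what that markup can look like, the snippet below builds JSON-LD for an article using schema.org's Article type (a subtype of CreativeWork), with a Person as the author and an Organization as the publisher. The headline, names, and URL are placeholders, and the helper function is hypothetical. Check the required properties against the documentation of whichever consumer you are targeting.

```python
import json


def article_jsonld(headline: str, author_name: str, org_name: str, url: str) -> dict:
    """Build a basic schema.org Article description as a JSON-LD dict."""
    return {
        "@context": "https://schema.org",
        "@type": "Article",
        "headline": headline,
        "url": url,
        "author": {"@type": "Person", "name": author_name},
        "publisher": {"@type": "Organization", "name": org_name},
    }


markup = article_jsonld(
    headline="What Is an AI Visibility Score?",
    author_name="Jane Example",          # placeholder
    org_name="Example Analytics",        # placeholder
    url="https://example.com/blog/ai-visibility-score",
)
print(json.dumps(markup, indent=2))
```

The printed JSON goes inside a script element of type application/ld+json in the page's HTML.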

4. Use Optimization Suggestions for Quick Wins

Most AI visibility tools (including AI Sightline) generate AI-powered optimization suggestions. These aren't guesses—they're derived from analyzing why competitors rank higher.

Acting on 2-3 suggestions per week is low-friction optimization with measurable payoff.

Your 90-Day Visibility Improvement Plan

Weeks 1-2: Establish baseline. Define 10 core keywords, measure citation rate, sentiment, SoV, and position for each. Identify your top 3 competitors. Set 90-day targets: +5% citation rate, +8% SoV, +10% positive sentiment.

Weeks 3-4: Quick wins. Implement schema markup on your 5 highest-traffic pages. Reposition content to answer keywords earlier. Review optimization suggestions and act on the top 3.

Weeks 5-8: Content gaps. Analyze which adjacent topics competitors own that you don't. Publish 2-3 deep-dive articles on these gaps. Measure weekly to track when new content starts getting cited.

Weeks 9-12: Refinement. Double down on what's working. Fix what's not. Review final metrics against baseline and targets.

By week 12, most teams see: +8-12% citation rate, +5-15% SoV improvement, and +15-20% positive sentiment shift.

Start Measuring Your AI Visibility Today

Your AI visibility score is the metric your competitors are already tracking—whether you measure or not. The gap between what they see and what you see is the gap in your strategy.

Start with a free AI Sightline account. It tracks 3 platforms and 5 keywords, which is enough to understand your baseline. If you need full platform coverage (all six systems) and optimization suggestions, the Pro plan at $149.95/mo gives you 100 keywords, 75 tracked prompts on a daily rolling cycle, and 75 recommendations per month.

Want to automate visibility queries into your internal tools? Our REST API and MCP Server let you build custom dashboards and connect visibility data to your broader analytics stack.

What gets measured gets managed. Start measuring your AI visibility this week.

What is an AI visibility score?

An AI visibility score is a single number between 0 and 100 summarizing how often your brand appears across a fixed set of AI assistants for a fixed set of prompts. Higher means you show up more often, more prominently, and across more platforms. It is the GEO equivalent of a domain authority score for SEO.

What is a good AI visibility score?

Context matters more than the absolute number, but for B2B SaaS, anything above 40 puts you in the top quartile of your category and anything above 70 means you are the default answer. New brands starting at 0 should target 20 within their first quarter of focused GEO work.

How is the score calculated?

AI Sightline calculates it as a weighted average of presence (did you appear), position (how prominent you are inside the answer), and citation (did the assistant link to you). Each AI platform contributes equally, so a brand that wins on ChatGPT but loses on Gemini ends up with a balanced score. The exact weights live in the methodology page.
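To illustrate the shape of that calculation, here is a sketch under stated assumptions. The 0.4/0.3/0.3 weights are invented for the example and are not AI Sightline's actual weights. Each component is assumed to be normalized to the 0-1 range per platform, and the function names are hypothetical.

```python
# Assumed weights for illustration only; the real ones are not given here.
ASSUMED_WEIGHTS = {"presence": 0.4, "position": 0.3, "citation": 0.3}


def platform_score(metrics: dict[str, float]) -> float:
    """Weighted average of the three components, scaled to 0-100."""
    return 100.0 * sum(
        weight * metrics[name] for name, weight in ASSUMED_WEIGHTS.items()
    )


def visibility_score(per_platform: dict[str, dict[str, float]]) -> float:
    """Average the platform scores so each platform contributes equally."""
    if not per_platform:
        return 0.0
    scores = [platform_score(m) for m in per_platform.values()]
    return sum(scores) / len(scores)


observed = {
    "ChatGPT": {"presence": 1.0, "position": 1.0, "citation": 1.0},
    "Gemini": {"presence": 0.0, "position": 0.0, "citation": 0.0},
}
print(round(visibility_score(observed), 1))
```

A perfect result on one platform and nothing on the other lands in the middle of the scale, which is the balancing effect described above.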

How often does the score update?

Daily on Pro and above, weekly on Starter, and on demand for Free. Each scan cycle queries the live model with your tracked prompts and refreshes every component metric.


Solo founder building AI visibility monitoring. Ships weekly. No venture capital, a lot of opinions about where AI search is going.