Every brand monitoring their AI visibility hits the same wall. You open your dashboard, you see the numbers, you know ChatGPT barely mentions you, Google AI Overviews skips you entirely, and Perplexity cites your competitor three times more often. You stare at the data and think: "OK, but what do I actually do about this?"

That question -- the gap between knowing you have an AI visibility problem and knowing how to fix it -- is the reason we built AI Sightline's recommendation engine and impact report. Starting today, AI Sightline doesn't just show you where you stand. It tells you exactly what to fix, ranks fixes by potential impact, and measures whether your changes actually worked.

No more exporting CSVs and guessing. No more paying a consultant to tell you what your own data already knows. Your AI visibility data now talks back.

Why Monitoring Without Recommendations Is a Dead End

Here's the uncomfortable truth about AI visibility monitoring: data without direction is just expensive anxiety.

You track 75 prompts across 5 AI platforms. You watch your visibility score move up 2 points, down 3 points, sideways for a week. You notice a competitor gaining ground on Perplexity. You see your own domain getting cited less on ChatGPT than last month. All useful information. None of it tells you what to do next.

According to AI Sightline's analysis of scan data across active brands, the average user checks their dashboard 3-4 times per week but takes action on their AI visibility less than once per month. The bottleneck isn't awareness. It's knowing which lever to pull.

That's the gap we just closed.

How the Recommendation Engine Works

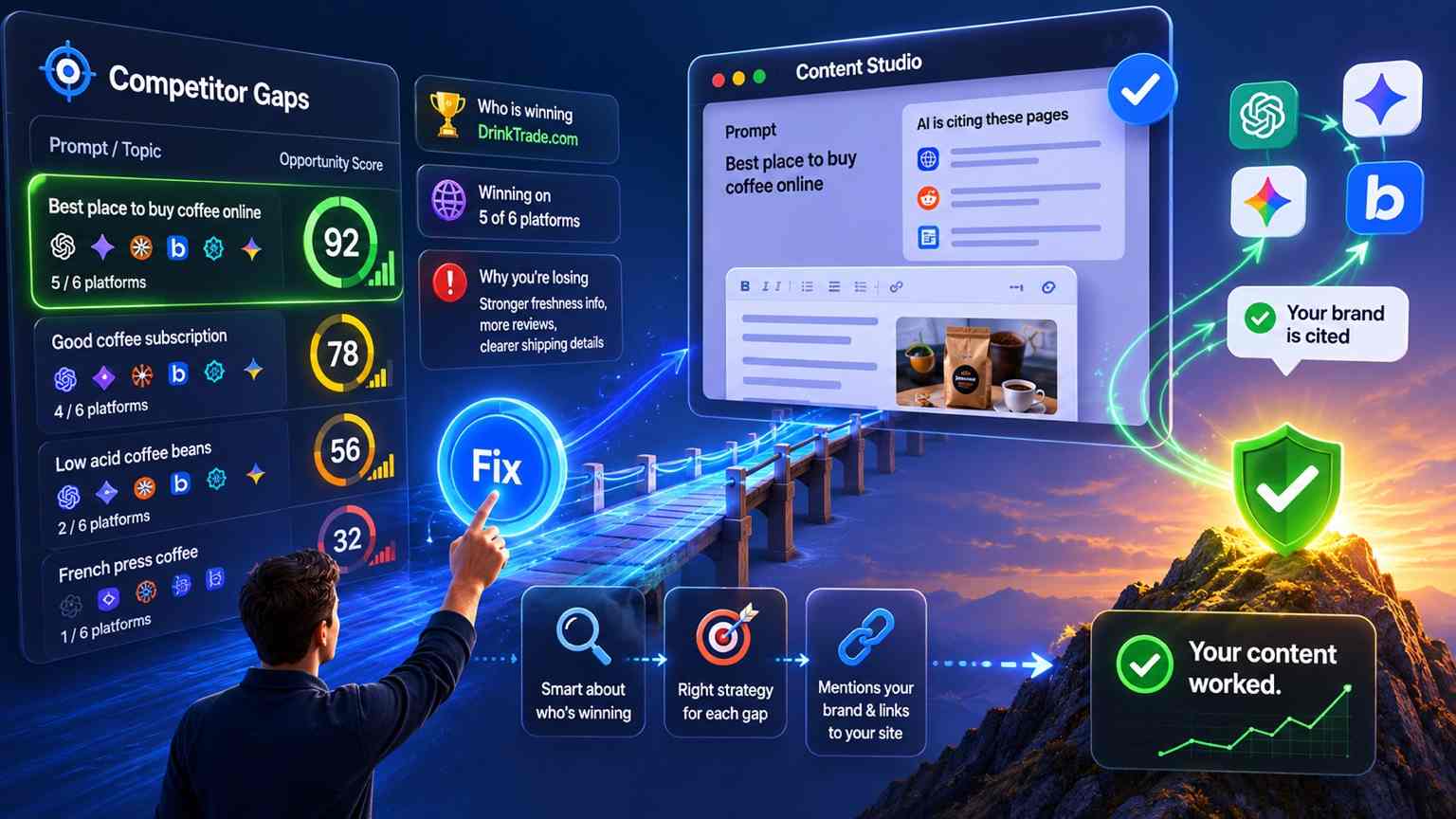

AI Sightline's recommendation engine runs automatically after every scan cycle. It analyzes your visibility data, citation patterns, competitor movements, and content gaps to generate specific, prioritized actions you can take right now.

Every recommendation includes three things: what to do, why it matters, and the estimated impact on your AI visibility. No vague advice like "improve your content strategy." Instead, you get specific calls like "Add schema markup to your /pricing page -- competitors with structured data on pricing pages are cited 2.3x more often in AI responses about your category."

Four Types of Recommendations (With More Coming)

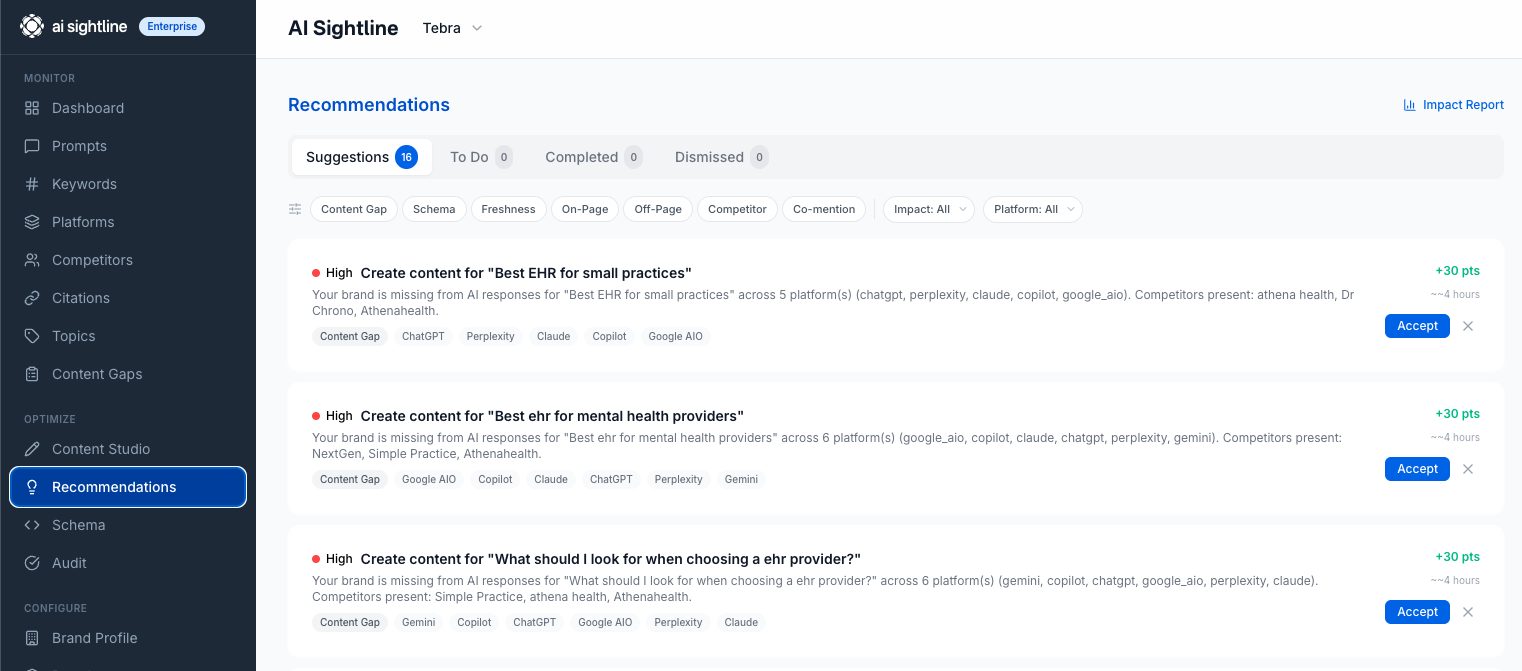

The engine currently generates four types of AI visibility recommendations, each targeting a different gap in your presence:

Content Gap Recommendations. These fire when AI platforms are answering questions in your category but never citing your content. The engine identifies the specific topics where you're invisible and suggests content you should create or optimize. If ChatGPT is answering "best project management tools for remote teams" and pulling from three competitors but never from you, you'll see a recommendation to create or optimize content targeting that exact topic.

Co-Mention Recommendations. When your brand consistently appears alongside specific domains in AI responses, that's a signal. The engine identifies your strongest co-mention relationships -- domains that show up next to you in 70%+ of shared AI responses -- and recommends ways to strengthen those associations or address gaps where competitors have co-mention relationships you don't.

Schema Recommendations. Structured data is one of the most underused levers for AI visibility. The engine checks whether your pages have the schema markup that AI platforms use to extract structured information. Missing JSON-LD, incomplete organization schema, or absent FAQ markup all trigger specific fix recommendations.

Competitor Intelligence Recommendations. When a competitor's visibility score jumps, the engine doesn't just flag it -- it analyzes what changed and recommends a response. If a competitor gained 15 points on Google AI Overviews last week, you'll see a recommendation that breaks down what content they published and what you can do to compete.
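To make the 70% co-mention threshold from the Co-Mention paragraph above concrete, here is a minimal sketch of how such a rate could be computed. It assumes each AI response has already been reduced to the set of domains it mentions, and it reads "shared AI responses" as the responses that mention your brand. The function names, the data shape, and the example domains are hypothetical, chosen for illustration; this is not AI Sightline's actual implementation.

```python
from collections import Counter


def co_mention_rates(responses, brand):
    """Return {domain: share of brand-mentioning responses that also mention domain}.

    `responses` is a list of sets, each holding the domains mentioned in one
    AI response (a hypothetical data shape chosen for this sketch).
    """
    with_brand = [r for r in responses if brand in r]
    if not with_brand:
        return {}
    counts = Counter(d for r in with_brand for d in r if d != brand)
    return {d: n / len(with_brand) for d, n in counts.items()}


def strong_co_mentions(responses, brand, threshold=0.70):
    """Domains co-mentioned with `brand` in at least `threshold` of its responses."""
    rates = co_mention_rates(responses, brand)
    return sorted(d for d, rate in rates.items() if rate >= threshold)


# Illustrative data only: five responses, four of which mention the brand.
responses = [
    {"yourbrand.example", "industrypub.example", "rival.example"},
    {"yourbrand.example", "industrypub.example"},
    {"yourbrand.example", "industrypub.example"},
    {"yourbrand.example", "rival.example"},
    {"rival.example"},
]
print(strong_co_mentions(responses, "yourbrand.example"))
# ['industrypub.example']
```

In this made-up sample, the publication appears in 3 of the 4 responses that mention the brand (75%), so it clears the threshold, while the rival at 50% does not.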

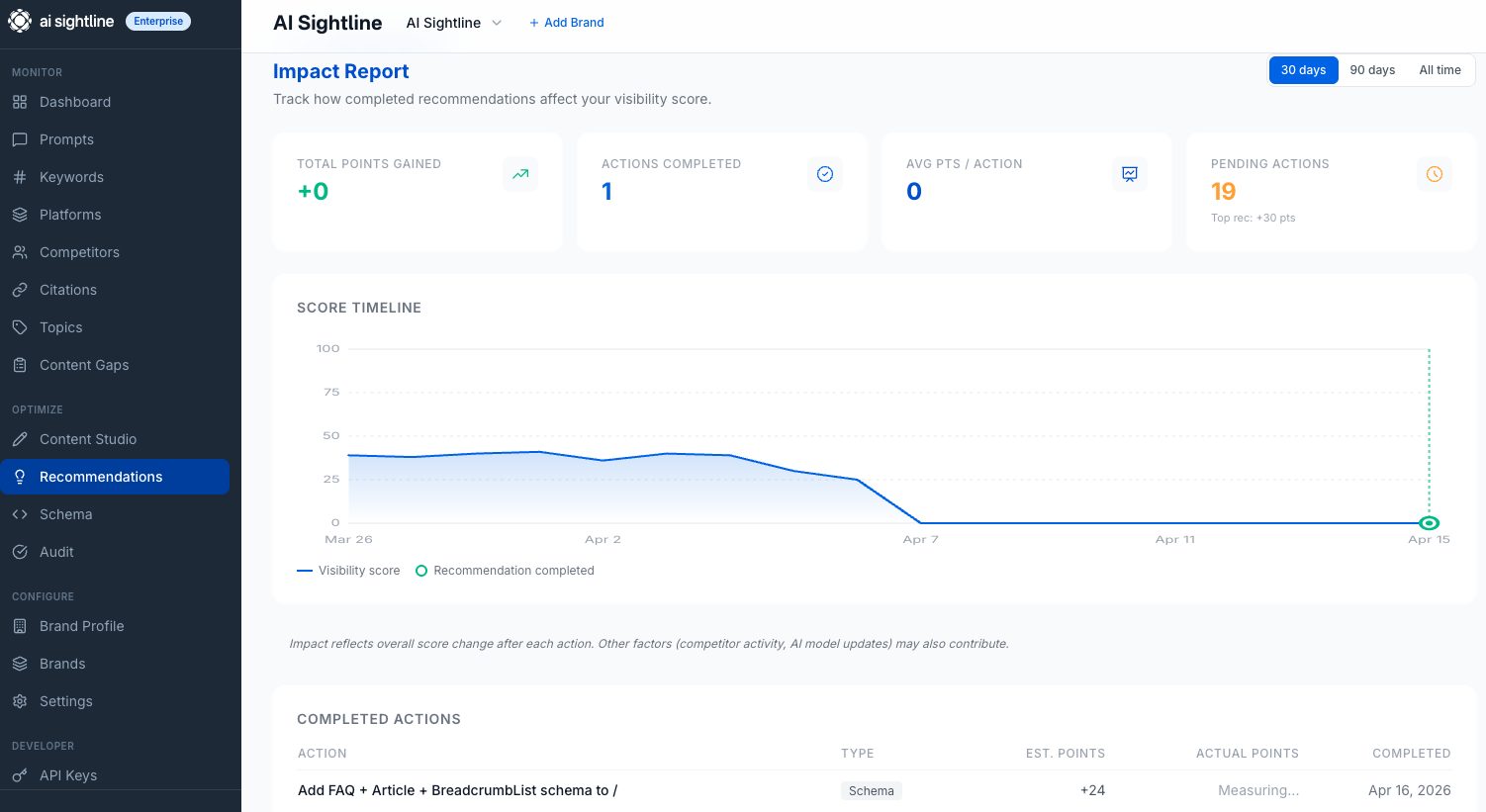

The Impact Report: Proof That Your Work Moved the Needle

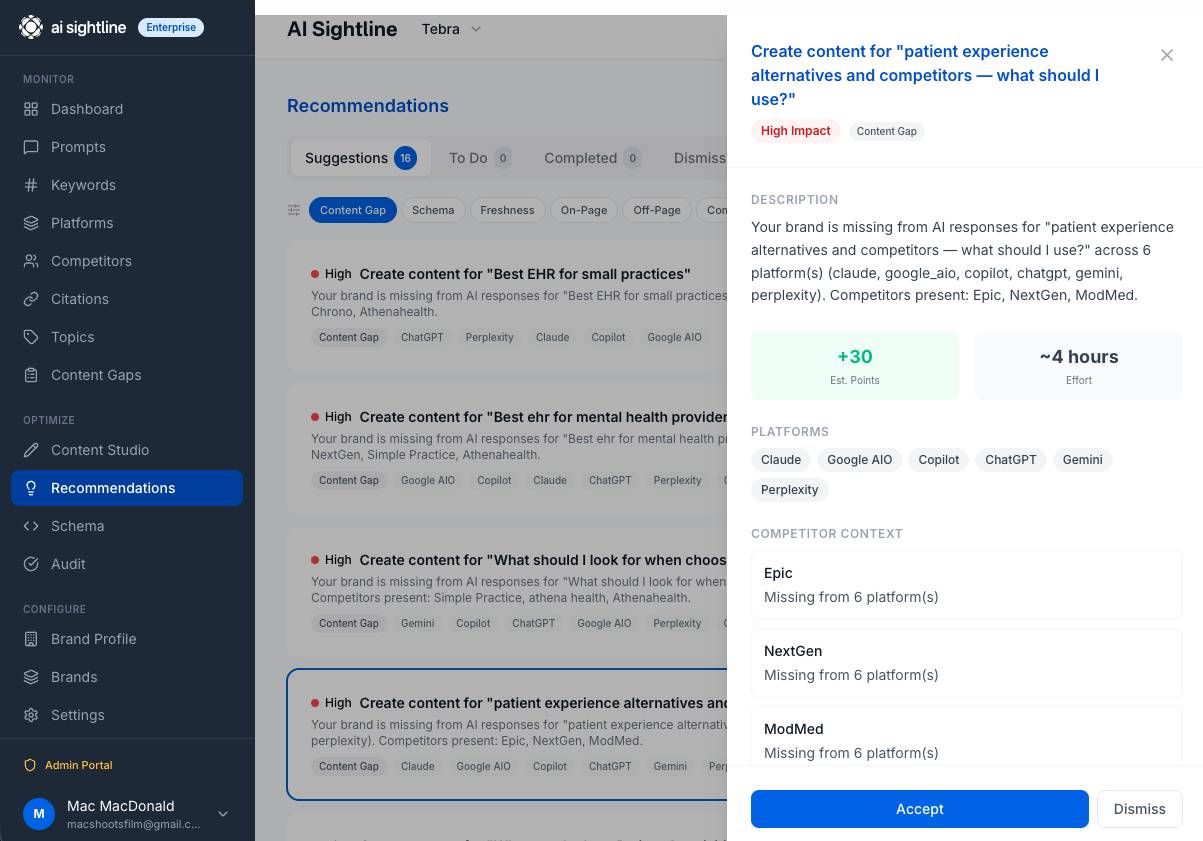

Recommendations are only half the story. The other half is knowing whether following them actually worked.

When you complete a recommendation in AI Sightline, the system takes a snapshot of your visibility metrics at that moment. Over the following scan cycles, it measures what changed. Did your visibility score go up? Did your citation rate improve on the platforms the recommendation targeted? Did the content gap close?

The Impact Report shows estimated impact vs. actual results side by side. No black-box "trust us, it helped" claims. You see the numbers. If a recommendation predicted a 12-point visibility improvement and you got 8, that's what the report shows. Full transparency.
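The estimated-versus-actual comparison comes down to simple arithmetic on two snapshots. The sketch below reproduces the 12-point prediction and 8-point result from the example above. The `Snapshot` class, the `impact_row` function, and the baseline scores of 41 and 49 are assumptions made up for illustration, not the product's real data model.

```python
from dataclasses import dataclass


@dataclass
class Snapshot:
    """Visibility score captured at one point in time (hypothetical shape)."""
    visibility_score: float


def impact_row(estimated_gain, before, after):
    """Compare the predicted gain with the gain actually observed."""
    actual_gain = after.visibility_score - before.visibility_score
    return {
        "estimated": estimated_gain,
        "actual": actual_gain,
        "shortfall": estimated_gain - actual_gain,
    }


# Snapshot taken when the recommendation was marked complete, then a later scan.
# Both scores are invented for this example.
before = Snapshot(visibility_score=41)
after = Snapshot(visibility_score=49)
print(impact_row(12, before, after))
# {'estimated': 12, 'actual': 8, 'shortfall': 4}
```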

This matters because AI visibility optimization is still new territory for most marketing teams. Nobody has years of institutional knowledge about what works. The impact report builds that knowledge base for you automatically -- every completed recommendation becomes a data point about what moves the needle for your brand on your target platforms.

What This Looks Like in Practice

Let's walk through a typical scenario. You're a B2B SaaS company tracking 75 prompts across ChatGPT, Google AI Overviews, Perplexity, Claude, and Gemini on AI Sightline's Pro plan at $149.95/mo.

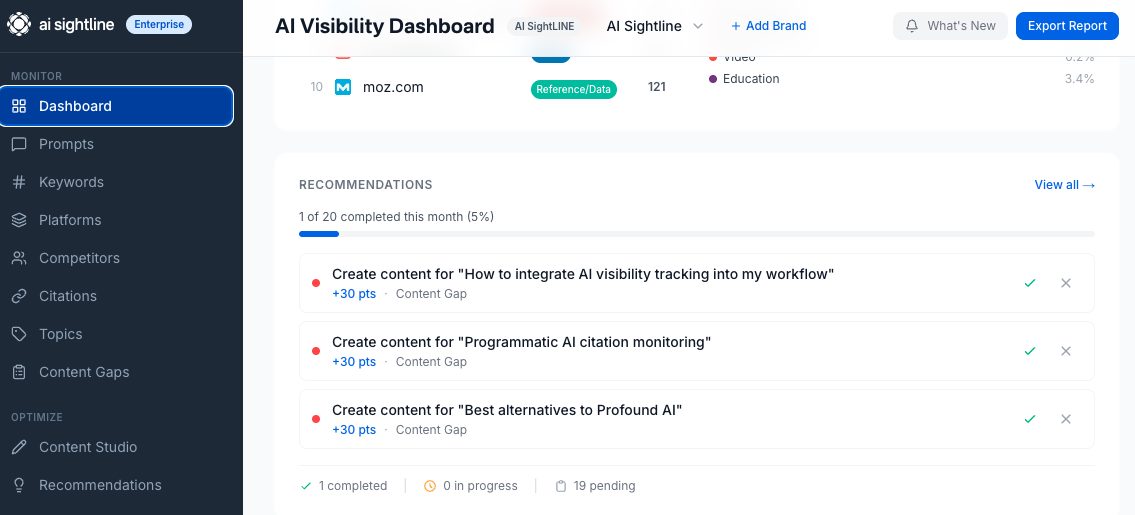

Monday morning. You open your dashboard and see 4 new recommendations in the Recommendations card.

Recommendation 1 (Content Gap, High Impact): "ChatGPT is answering 'best [your category] tools for enterprises' using content from 3 competitors. Your brand isn't cited. Create a comparison page targeting this query with structured feature data and pricing."

Recommendation 2 (Schema, Medium Impact): "Your /features page lacks Product schema markup. Competitors with Product schema on equivalent pages are cited 40% more often in AI responses about feature comparisons."

Recommendation 3 (Competitor Intel, Medium Impact): "Competitor X gained 18 visibility points on Perplexity this week. Their new technical blog post on [topic] is being cited. Consider publishing competing content."

Recommendation 4 (Co-Mention, Low Impact): "Your brand is co-mentioned with [Industry Publication] in 78% of shared AI responses. Strengthening this association through guest content or backlinks could improve citation rates."

You accept Recommendations 1 and 2, dismiss Recommendation 4 as low priority, and mark Recommendation 3 for later. Over the next two weeks, you publish the comparison page and add schema markup. You mark both as complete.

Two weeks later. Your Impact Report shows the comparison page drove a 9-point visibility increase on ChatGPT for that query cluster. The schema fix improved your citation rate on Google AI Overviews by 14%. Real numbers. Real proof that the work mattered.

How This Compares to What's Out There

Most AI visibility tools stop at monitoring. They show you charts, maybe send you an alert when something changes, and leave you to figure out the rest. A few competitors offer "recommendations," but they're generic tips -- "optimize your content for AI search" -- not specific actions derived from your actual data.

Here's how AI Sightline's recommendation engine stacks up:

| Feature | AI Sightline | Otterly | Peec | Semrush |
|---|---|---|---|---|
| AI visibility monitoring | 6 platforms | 6 platforms | 5 platforms | 1 platform |
| Data-driven recommendations | Yes, 4 types | No | Generic tips | No |
| Impact tracking | Yes, est. vs actual | No | No | No |
| API access | From Starter ($29.95/mo) | No | No | Enterprise only |
| MCP server for AI agents | Yes (30 tools) | No | No | No |
| Price for 75 prompts | $149.95/mo (Pro) | ~$150/mo | ~$99/mo | $99+/mo add-on |

The gap isn't subtle. Other tools tell you there's a problem. AI Sightline tells you the problem, tells you the fix, and tells you whether the fix worked. That's the difference between a dashboard and a coach.

Who Gets Recommendations (And at What Tier)

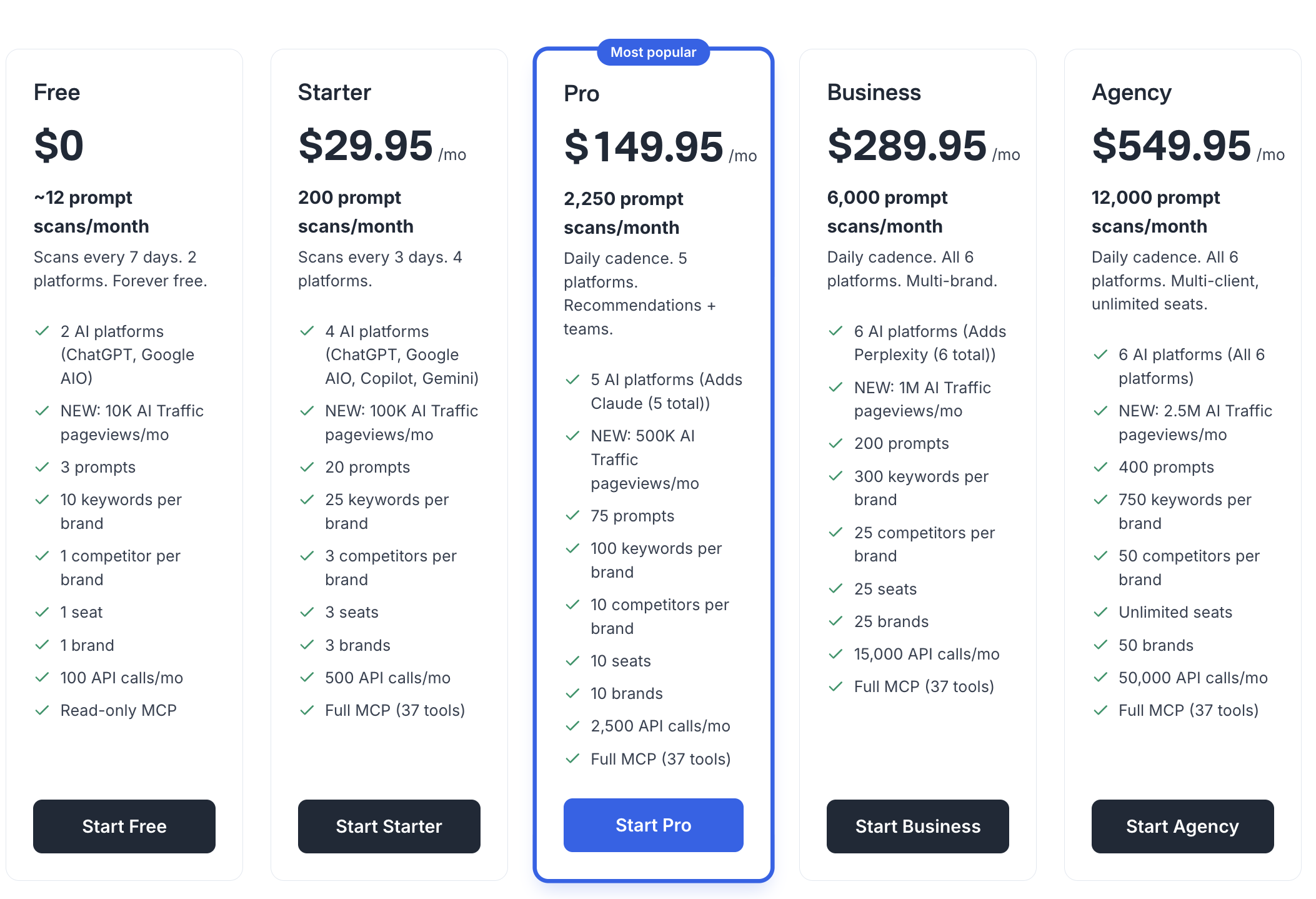

AI visibility recommendations are available on every AI Sightline plan, scaled by your usage tier:

Free ($0/mo): 2 suggestions per month across 2 platforms and 3 prompts. Enough to see the engine in action and understand what kind of recommendations you'd get with more data.

Starter ($29.95/mo): 10 suggestions per month across 4 platforms and 20 prompts. Good for freelancers and small brands starting to take AI visibility seriously.

Pro ($149.95/mo): 75 suggestions per month across 5 platforms and 75 prompts. This is the sweet spot -- enough data for the recommendation engine to identify patterns, enough suggestions to build a real optimization workflow. Full MCP access means your AI agents can query recommendations programmatically.

Business ($289.95/mo): 300 suggestions per month across all 6 platforms with 200 prompts. Content generation and auto-schema generation included. For teams running AI visibility as a core channel.

Agency ($549.95/mo): 750 suggestions per month across all 6 platforms with 400 prompts. Everything in Business plus the scale for agencies and large brands managing AI visibility across multiple properties.

Every paid tier includes the Impact Report. See the full pricing breakdown here.

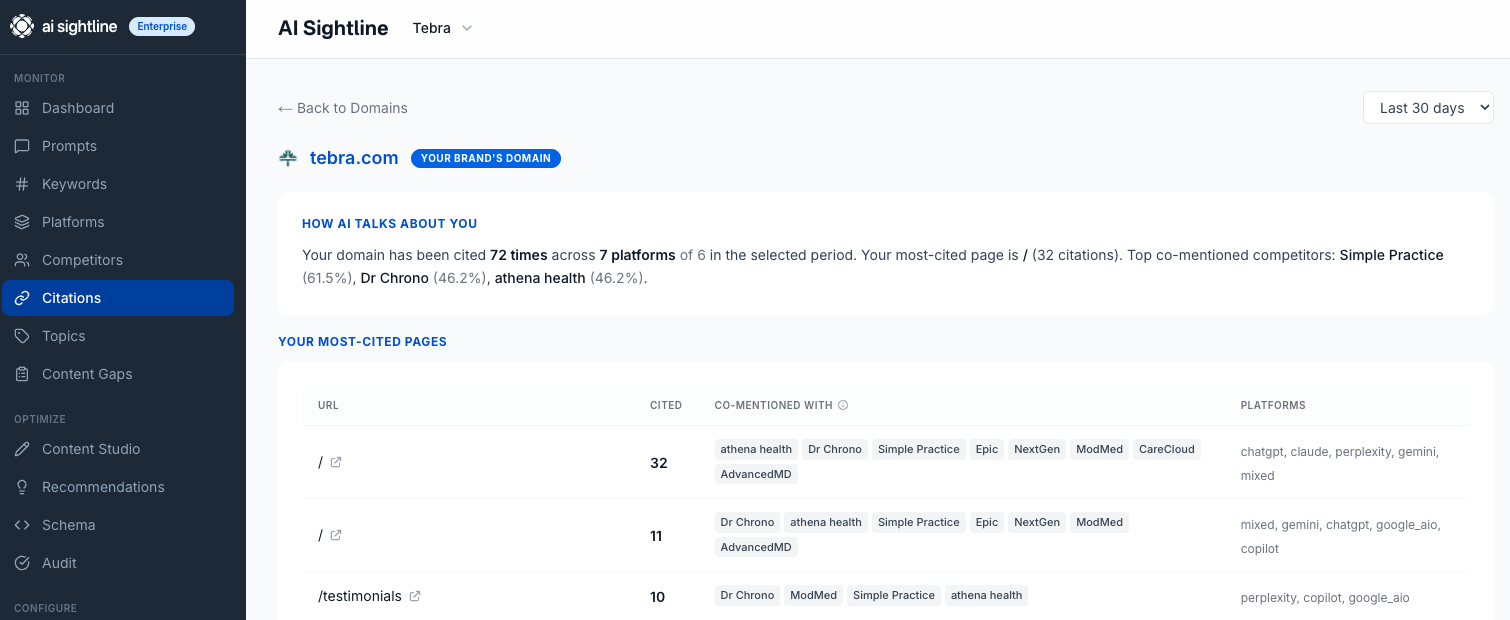

The "How AI Talks About You" View

We also shipped something we're calling the Own Domain Perception view. When you click into your own domain in the citation leaderboard, instead of the standard action-oriented layout, you see a perception-focused experience.

It answers the question: "How do AI platforms actually talk about my brand?"

You see which AI responses mention you, what context they mention you in, which other brands you're consistently co-mentioned with, and whether the mentions are positive, neutral, or factual. It's like reading your own reviews -- except the reviewer is ChatGPT, and the audience is everyone who asks it about your category.

This pairs with the recommendation engine. If the perception view shows AI platforms consistently describe you as "a smaller alternative to [Competitor]," the engine might generate a content gap recommendation to publish authoritative content that positions you differently.

Citation Taxonomy: Know Where Your Mentions Come From

Under the hood, we also rebuilt how citations are classified. Every citation in your AI Sightline dashboard now falls into one of three categories:

Own Domain. These are citations of your own website. When ChatGPT links to your blog post or Perplexity cites your documentation, that's an own-domain citation. This is the metric brands tend to care about most.

Third-Party Earned. These are citations of authoritative sources -- news publications, industry blogs, review sites, directories -- that mention your brand. If Forbes writes about your product and Perplexity cites that Forbes article in response to a query about your category, that's an earned citation. High value, hard to get.

Third-Party Unearned. Everything else. Competitor pages, random forums, generic aggregator sites. These citations exist but don't carry the same weight.

The recommendation engine uses this taxonomy. A content gap recommendation hits differently when you can see that competitors have 12 earned citations from industry publications and you have 2. It turns an abstract "you need more visibility" into a concrete "you need to earn coverage from these types of sources."
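For a feel of how the three categories separate in practice, here is a minimal classification sketch. It assumes you already know whether the cited page mentions your brand, and it stands in for "authoritative" with a tiny hard-coded allow-list. `AUTHORITATIVE_DOMAINS`, `classify_citation`, and the example URLs are hypothetical; the real classifier presumably relies on richer signals.

```python
from urllib.parse import urlparse

# Hypothetical allow-list of authoritative sources; a real system would need
# a much richer signal than a hard-coded set.
AUTHORITATIVE_DOMAINS = {"forbes.com"}


def _host(url):
    """Lower-cased hostname without a leading 'www.'."""
    host = (urlparse(url).hostname or "").lower()
    return host[4:] if host.startswith("www.") else host


def classify_citation(url, own_domain, mentions_brand):
    """Assign a cited URL to one of the three categories described above."""
    host = _host(url)
    # Your own site, including subdomains such as docs.yourbrand.example.
    if host == own_domain or host.endswith("." + own_domain):
        return "own_domain"
    # An authoritative third party that actually mentions the brand.
    if mentions_brand and host in AUTHORITATIVE_DOMAINS:
        return "third_party_earned"
    # Everything else: competitor pages, forums, aggregators.
    return "third_party_unearned"


print(classify_citation("https://www.yourbrand.example/blog/post", "yourbrand.example", True))
print(classify_citation("https://www.forbes.com/some-article", "yourbrand.example", True))
print(classify_citation("https://forum.example/thread/1", "yourbrand.example", False))
```

Counting the results per category for you and for each competitor gives the kind of "12 earned citations versus 2" comparison described above.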

Getting Started Takes 30 Seconds

If you're already an AI Sightline user, recommendations are live right now. Open your dashboard -- you'll see the Recommendations card. Click through to the full Recommendations page to see your prioritized list, or dive into a specific recommendation to see the details and take action.

If you're new, start with a free account. You'll get 3 prompts, 2 platforms, and 2 suggestions per month. It's enough to see whether AI platforms are mentioning your brand, what they're saying, and what you should do about it.

The "what do I do about this?" era of AI visibility is over. Now you know exactly what to do, and you can prove it worked.

See your AI visibility recommendations -- free, no credit card required.

How do I build an AI visibility action plan?

Four steps. One, baseline your visibility across the six major AI platforms. Two, identify the prompts where your competitors appear and you do not. Three, ship content and schema fixes targeting those gaps. Four, re-scan and measure the delta. Repeat monthly until you outrank the category leader in your topic clusters.
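Step two, finding the prompts where competitors appear and you do not, is the easiest one to sketch in code. The example below assumes scan results are available as a mapping from (platform, prompt) to the set of brands cited in that response. That data shape, the `content_gaps` function, and the brand names are all hypothetical, and ordering gaps by the number of competitors cited is only one plausible heuristic.

```python
def content_gaps(scan_results, brand, competitors):
    """Return prompts where at least one competitor is cited but `brand` is not.

    `scan_results` maps (platform, prompt) to the set of brands cited in the
    response (a hypothetical shape for this sketch).
    """
    gaps = []
    for (platform, prompt), cited in scan_results.items():
        rivals = sorted(cited & competitors)
        if rivals and brand not in cited:
            gaps.append({"platform": platform, "prompt": prompt, "competitors": rivals})
    # Assumed heuristic: list the gaps with the most competitors cited first.
    return sorted(gaps, key=lambda g: len(g["competitors"]), reverse=True)


# Illustrative scan data only.
scan_results = {
    ("ChatGPT", "best project management tools for remote teams"): {"RivalA", "RivalB", "RivalC"},
    ("Perplexity", "best project management tools for remote teams"): {"YourBrand", "RivalA"},
    ("Claude", "project management pricing comparison"): {"RivalB"},
}

for gap in content_gaps(scan_results, "YourBrand", {"RivalA", "RivalB", "RivalC"}):
    print(gap["platform"], "|", gap["prompt"], "|", ", ".join(gap["competitors"]))
```

Running the same function after the next scan and comparing the two lists is a simple way to measure the delta in step four.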

What is the first move that actually shifts the score?

Add structured data and a tight FAQ block to your top five pages. Schema gives AI engines a clean entity graph to cite, and FAQs map cleanly to the conversational queries assistants are asked. Most teams see a measurable score lift within one scan cycle.
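If you have not written FAQ markup before, the sketch below builds a schema.org `FAQPage` object as JSON-LD and wraps it in the script tag that goes into your page's HTML. The `@type` values and property names follow the schema.org vocabulary; the helper names `faq_jsonld` and `as_script_tag` and the sample question are placeholders for illustration.

```python
import json


def faq_jsonld(pairs):
    """Build a schema.org FAQPage object from (question, answer) pairs."""
    return {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in pairs
        ],
    }


def as_script_tag(data):
    """Wrap JSON-LD in the script tag that goes into the page's HTML."""
    # Escape "<" so answer text can never close the script element early.
    body = json.dumps(data, indent=2).replace("<", "\\u003c")
    return '<script type="application/ld+json">\n' + body + "\n</script>"


# Placeholder content; swap in the real questions and answers from your page.
faq = faq_jsonld([
    ("What does your product do?", "A one or two sentence answer goes here."),
])
print(as_script_tag(faq))
```

Keep the marked-up questions and answers identical to the text visible on the page, and validate the output with a structured data testing tool before shipping.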

How long does it take to see results?

Schema and on-page changes show up in AI assistant responses within one to three weeks once the bots re-crawl. Backlinks and topical authority moves take longer, typically four to eight weeks for the citation pattern to shift. Expect the first measurable score change inside a month.

What is a realistic monthly visibility goal?

For a brand starting near zero, aim to move from missing on all six platforms to passing-quality citation on at least three within 60 days. For an established brand defending share of voice, hold within five points of the category leader. Set the goal against your specific cluster, not the category average.

Get your free AI visibility score.

See how ChatGPT, Claude, Perplexity, Gemini, Google AIO, and Copilot talk about your brand.

Start free

Solo founder building AI visibility monitoring. Ships weekly. No venture capital, a lot of opinions about where AI search is going.